GPU-Accelerated Surrogate Models for PNS: Revolutionizing Neurostimulation Safety and Drug Development


Abstract

This article explores the transformative role of GPU-accelerated surrogate models in predicting and mitigating peripheral nerve stimulation (PNS) risks in biomedical applications. We first establish the critical importance of PNS as a safety limiter in rapidly pulsed electromagnetic fields, such as those used in MRI and neuromodulation therapies. The core of the article details the methodology for developing and training high-fidelity, physics-informed neural network (PINN) surrogates on GPU platforms, enabling real-time PNS threshold prediction. We then address key challenges in implementation, including model instability and data scarcity, providing optimization strategies for robustness and speed. Finally, we validate these models against traditional, computationally intensive finite-element methods (FEM) and other machine learning approaches, quantifying gains in accuracy and computational efficiency. This resource provides researchers and drug development professionals with a comprehensive guide to leveraging next-generation computational tools for faster, safer therapeutic and diagnostic device innovation.

Understanding PNS Risks and the Computational Bottleneck: Why Surrogate Models Are Essential

Peripheral Nerve Stimulation (PNS) is the involuntary activation of nerves by time-varying magnetic fields or applied electric fields. In clinical MRI, PNS is the primary operational safety limit for gradient coil switching rates (slew rate), often restricting the speed of advanced imaging sequences. In neuromodulation, PNS represents a threshold for unintended side effects, delimiting the therapeutic window for techniques like Transcranial Magnetic Stimulation (TMS) or focused ultrasound. Understanding and predicting PNS thresholds is therefore critical for both safety and efficacy.

This document frames PNS research within the development of GPU-accelerated surrogate models—computationally efficient approximations of complex biophysical systems. These models enable rapid, high-fidelity simulation of electromagnetic fields and neuronal activation across vast parameter spaces, accelerating the design of safer MRI protocols and more precise neuromodulation therapies.

Key Quantitative Data in PNS Research

Table 1: Typical PNS Thresholds for Various Stimulation Modalities

| Stimulation Modality | Typical Threshold Metric | Approximate Threshold Range (Healthy Adults) | Key Determining Factors |
|---|---|---|---|
| MRI Gradient Coils | dB/dt (Rate of magnetic field change) | 20–100 T/s (for pulse duration > ~30 µs) | Slew rate, pulse shape, body region, coil geometry. |
| Transcranial Magnetic Stimulation (TMS) | Electric Field Strength (E-field) at target | 50–150 V/m (motor cortex, single pulse) | Coil type, pulse waveform, skull conductivity, cortical orientation. |
| Functional Electrical Stimulation (FES) | Injected Charge per Phase | 10–100 nC/ph (for surface electrodes) | Electrode size, location, nerve depth, frequency. |
| Focused Ultrasound (FUS) Neuromodulation | Spatial Peak Pulse Average Intensity (Isppa) | 10–300 W/cm² (for short pulses) | Frequency, pulse duration, duty cycle, target nerve type. |

Table 2: Core Electrical Properties of Tissues for PNS Modeling

| Tissue Type | Conductivity σ at 1 kHz (S/m) | Relative Permittivity εr at 1 kHz | Critical Role in PNS Models |
|---|---|---|---|
| Cerebrospinal Fluid (CSF) | 1.5 – 2.0 | 100 – 120 | Provides low-resistance path, shunting currents. |
| Gray Matter | 0.07 – 0.15 | 200,000 – 400,000 | Primary neuromodulation target; high capacitance. |
| White Matter (Transverse) | 0.06 – 0.08 | 20,000 – 40,000 | Anisotropic; conductivity depends on fiber direction. |
| White Matter (Longitudinal) | 0.3 – 0.5 | 20,000 – 40,000 | Favors current flow along axonal tracts. |
| Muscle (Transverse) | 0.08 – 0.12 | 8,000 – 15,000 | Highly anisotropic; influences surface stimulation. |
| Muscle (Longitudinal) | 0.3 – 0.6 | 8,000 – 15,000 | Common site for PNS during MRI. |
| Skin | 0.0002 – 0.002 | 1,000 – 10,000 | High impedance layer for surface electrodes. |
| Skull | 0.006 – 0.015 | 100 – 200 | Attenuates and diffuses currents in TMS/tDCS. |

Core Protocols for PNS Investigation

Protocol 1: In Silico Prediction of PNS Thresholds Using GPU-Accelerated Models

Objective: To rapidly compute induced electric fields and predict neuronal activation thresholds for a given coil or electrode configuration. Workflow:

- Geometry Definition: Import or create 3D models of the stimulation device (e.g., MRI gradient coil, TMS coil) and an anatomical human model (e.g., from the Visible Human Project or a population-averaged atlas).

- Tissue Property Assignment: Assign frequency-dependent conductivity (σ) and permittivity (ε) values to each tissue type in the model (see Table 2).

- Electromagnetic Simulation (GPU-accelerated):
  - Solve Maxwell's equations in the low-frequency (quasi-static) regime on the GPU (e.g., using the Scalar Potential Finite Difference method or the Boundary Element Method) to compute the induced time-varying E-field distribution throughout the volume.
  - Key Parameter Sweep: Vary the stimulation waveform amplitude, slew rate (dB/dt), and pulse shape in the simulation.

- Neuronal Activation Coupling:
  - Extract the temporal E-field waveform along predicted neural pathways.
  - Input this E-field into a multicompartment cable model (e.g., a myelinated axon model such as the Frankenhaeuser-Huxley model) running on the GPU.
  - Determine the threshold amplitude at which an action potential is initiated.

- Validation & Surrogate Model Training: Compare predicted thresholds to in vitro or literature data. Use the high-fidelity simulation dataset to train a lightweight, GPU-based surrogate model (e.g., a neural network) for instantaneous threshold prediction.
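The electromagnetic step of this workflow usually relies on the quasi-static approximation. As a sketch, assuming displacement currents are neglected and writing $\mathbf{A}$ for the coil's magnetic vector potential, $V$ for the scalar potential set up by charge accumulating at conductivity boundaries, and $\hat{\mathbf{n}}$ for the outward normal of the body surface, current conservation $\nabla \cdot (\sigma \mathbf{E}) = 0$ gives:

$$
\mathbf{E} = -\frac{\partial \mathbf{A}}{\partial t} - \nabla V,
\qquad
\nabla \cdot \left( \sigma \nabla V \right) = -\nabla \cdot \left( \sigma \frac{\partial \mathbf{A}}{\partial t} \right),
\qquad
\hat{\mathbf{n}} \cdot \sigma \mathbf{E} = 0 \ \text{on the body surface.}
$$

For electrode stimulation there is no induced term ($\partial \mathbf{A}/\partial t = 0$), and the equation reduces to $\nabla \cdot (\sigma \nabla V) = 0$ with electrode potentials or currents as boundary conditions. Because $\partial \mathbf{A}/\partial t$ is proportional to the coil current slew rate, the induced field for a fixed coil and body model scales linearly with dB/dt, so amplitude and slew-rate sweeps can reuse a single unit-amplitude solution.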

Protocol 2: In Vitro Validation of PNS Models Using a Nerve Chamber

Objective: To experimentally measure excitation thresholds of peripheral nerve tissue for correlation with computational predictions. Workflow:

- Nerve Preparation: Isolate a sciatic nerve from an anesthetized amphibian (e.g., frog Xenopus laevis) or mammalian model. Place it in a temperature-controlled (e.g., 22°C) nerve chamber perfused with oxygenated Ringer's solution.

- Stimulation Setup: Position the nerve between parallel platinum electrodes connected to a programmable isolated stimulator. Align the nerve longitudinally with the generated E-field.

- Recording Setup: Place a suction or hook recording electrode on the distal end of the nerve. Connect to a differential amplifier and high-speed data acquisition system.

- Threshold Determination Protocol:
  - Apply a monophasic rectangular current pulse (e.g., 100 µs duration).
  - Increase the stimulus intensity from zero until a compound action potential (CAP) first appears; this brackets the threshold.
  - Refine the bracket with a binary search, defining the threshold current (I_th) as the amplitude that elicits a measurable CAP on 50% of trials.
  - Repeat for different pulse widths and waveforms (e.g., biphasic, sinusoidal).

- Data Correlation: Input the experimental chamber geometry and stimulus parameters into the computational model from Protocol 1. Compare the predicted activating E-field at the measured I_th to the classical nerve activation thresholds (typically ~6-10 V/m for 100 µs pulses).
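The bracketing-then-bisection search used to find I_th can be sketched as follows. `fires` is a hypothetical callable standing in for "deliver one pulse at this amplitude and report whether a CAP was detected", and it is assumed to be monotonic in amplitude. For the 50% criterion, the hypothetical `majority_response` wrapper repeats trials at each probed amplitude; with real, noisy preparations this only approximates the 50% point.

```python
from typing import Callable


def find_threshold(fires: Callable[[float], bool],
                   start: float = 0.1,
                   growth: float = 2.0,
                   rel_tol: float = 1e-3,
                   max_amp: float = 1e3,
                   max_iter: int = 200) -> float:
    """Return the lowest amplitude (within rel_tol) for which fires() is True.

    Amplitudes share the units of `start` (e.g., mA). Assumes fires() is
    monotonic: False below threshold, True at or above it.
    """
    # Phase 1 (bracketing): grow the amplitude geometrically until a CAP appears.
    low, high = 0.0, start
    while not fires(high):
        low, high = high, high * growth
        if high > max_amp:
            raise ValueError("no response below max_amp; check preparation or range")
    # Phase 2 (bisection): keep fires(high) True and fires(low) False
    # (low == 0.0 is treated as sub-threshold without being probed).
    for _ in range(max_iter):
        if high - low <= rel_tol * high:
            break
        mid = 0.5 * (low + high)
        if fires(mid):
            high = mid
        else:
            low = mid
    return high


def majority_response(trial: Callable[[float], bool],
                      n_trials: int = 10) -> Callable[[float], bool]:
    """Wrap a single-trial probe so it reports True when >= 50% of trials respond."""
    def probe(amplitude: float) -> bool:
        hits = sum(1 for _ in range(n_trials) if trial(amplitude))
        return 2 * hits >= n_trials
    return probe


# Toy stand-in for a real preparation: a deterministic threshold at 1.37 (e.g., mA).
threshold = find_threshold(lambda amp: amp >= 1.37)
```

Geometric growth keeps the number of sub-threshold pulses small when the threshold's order of magnitude is unknown, and bisection then halves the uncertainty with every additional pulse.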

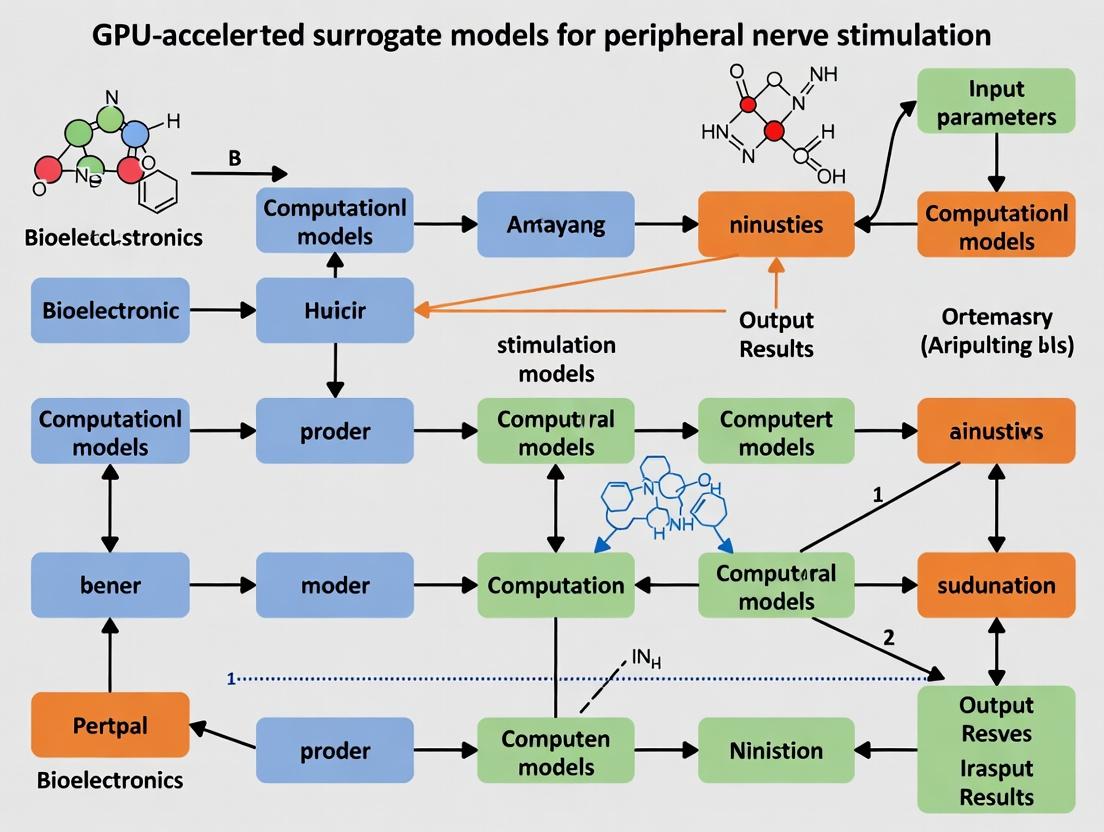

Visualization of Core Concepts

Diagram 1: GPU-Accelerated PNS Prediction Workflow

Diagram 2: Key Signaling in Electrically-Induced Neuronal Activation

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Materials for PNS Research

| Item Name / Category | Function & Application | Example / Specification Notes |
|---|---|---|
| High-Performance Computing (HPC) Cluster with GPUs | Runs complex electromagnetic and neuronal simulations. Essential for parameter sweeps and surrogate model training. | NVIDIA A100 or H100 GPUs; CUDA-optimized solvers (e.g., Sim4Life, COMSOL with GPU support, custom FDTD code). |
| Detailed Anatomical Model Datasets | Provides realistic geometry for simulation. Determines accuracy of E-field predictions near nerves. | "Virtual Family" models, "MRI-Based Models"; must include segmentation of peripheral nerves, muscles, fat, skin. |
| Programmable Isolated Stimulator | Generates precise, replicable current or voltage waveforms for in vitro and in vivo validation studies. | Digitally controlled, constant current output (e.g., from A-M Systems, Digitimer). Must support µs-range pulses. |
| Nerve Chamber & Perfusion System | Maintains excised nerve tissue viability during in vitro electrophysiology experiments. | Temperature-controlled (20-37°C) bath with platinum electrodes; oxygenated physiological solution (e.g., Ringer's). |
| Differential Amplifier & Data Acquisition (DAQ) System | Records minute neural signals (compound action potentials) with high signal-to-noise ratio. | High impedance input, adjustable gain/filtering (e.g., from A-M Systems); >100 kHz sampling rate DAQ card. |
| Computational Electrophysiology Software | Implements multicompartment neuronal models to predict activation from simulated E-fields. | NEURON simulation environment and its Python interface (the `neuron` module); custom Hodgkin-Huxley-type model scripts. |
| Tissue-Equivalent Phantoms | Validates E-field simulations experimentally in a controlled, reproducible medium. | Gel-based phantoms with ionic conductivity matched to muscle or nerve; often includes mapping with E-field probes. |
| Surrogate Model Development Framework | Creates fast, approximate models from high-fidelity simulation data for real-time prediction. | Python with TensorFlow/PyTorch; Gaussian Process Regression libraries (e.g., GPyTorch). |

This application note details the substantial computational requirements of traditional Peripheral Nerve Stimulation (PNS) prediction methods, specifically the Finite Element Method (FEM) and related high-fidelity electromagnetic models. These methods are critical for ensuring the safety of medical devices, particularly in drug development involving pulsed electromagnetic fields or MRI. Within the broader thesis on GPU-accelerated surrogate models, this document establishes the baseline in silico problem that next-generation models aim to address: accelerating PNS threshold prediction from days to minutes while maintaining biofidelity.

Quantitative Analysis of Computational Costs

Recent literature and benchmarks indicate that high-fidelity PNS prediction for a single posture or device configuration is a multi-scale, multi-physics problem. The table below summarizes typical computational demands.

Table 1: Computational Demand Profile for Traditional PNS Prediction Workflow

| Computational Stage | Typical Software/Tool | Hardware Demand (CPU) | Approx. Wall-clock Time | Key Bottleneck |
|---|---|---|---|---|
| 1. Anatomical Model Preparation | Simpleware ScanIP, ANSYS SCDM, 3D Slicer | High-core server (32-64 cores) | 40-120 hours | Manual segmentation, mesh quality assurance. |
| 2. Electromagnetic Solve (Low-Freq) | ANSYS Maxwell, COMSOL, Sim4Life | High-memory server (512GB-1TB RAM) | 6-24 hours per position | Solving for E-field/current density in heterogeneous tissue. |
| 3. Nerve Activation Calculation | NEURON, MATLAB-based in-house tools | High single-core performance | 2-10 hours per nerve trajectory | Solving cable equation for long nerve paths. |
| 4. Parameter Sweep / Safety Margin | Batch scripting across above tools | Cluster (100s of cores) | Days to weeks | Need for multiple coil positions, body models, frequencies. |
| Total for One Device Config | Integrated Pipeline (e.g., Sim4Life) | Dedicated HPC cluster node | 5-10 days | Sequential dependency of stages; inability to parallelize fully. |

Table 2: Resource Cost Estimation (Cloud/On-Premise HPC)

| Resource Type | Specification | Estimated Cost | Primary Use Case |
|---|---|---|---|
| On-Premise HPC | 32-core, 512GB RAM node | $500-$1,200 per run (amortized capital + power) | Low-frequency EM solve + PNS analysis for one posture. |
| Cloud Compute (AWS/Azure) | c5n.18xlarge (72 vCPUs, 192GB) | $250-$400 per run (spot) to $800+ (on-demand) | Time-sensitive or burst capacity needs. |
| Software Licenses | Commercial FEM Suite | $50,000-$150,000+ per year (not per run) | Access to validated, regulatory-accepted solvers. |

Detailed Experimental Protocols for Cited Studies

Protocol 3.1: High-Fidelity FEM PNS Threshold Prediction for MRI Gradient Coils

This protocol is adapted from recent studies on simulating PNS for ultra-high-field MRI systems.

Objective: To predict the PNS threshold for a novel asymmetric gradient coil design using a detailed anatomical human model.

Materials:

- Anatomical Model: "Duke" or "Ella" model from the IT'IS Virtual Population (v8.0).

- Software: Sim4Life V7.0 (or ANSYS Electronics Suite 2023 R2).

- Hardware: Linux cluster node with ≥ 256 GB RAM and ≥ 32 physical cores.

Procedure:

- Model Import & Positioning: Import the coil CAD model (.step file) and the anatomical model. Position the coil around the region of interest (e.g., torso for cardiac MRI). Define a homogeneous transmit volume.

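- Mesh Generation: Apply a conformal, inhomogeneous mesh. At gradient-switching frequencies (1-5 kHz) the wavelength in tissue far exceeds body dimensions, so element size is set by anatomical detail rather than a λ/10 rule: use a maximum element size of ≈1-2 mm in high E-field regions and a finer mesh (0.5 mm) along expected nerve pathways (e.g., sciatic, femoral). Expect 150-300 million mesh elements.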

- Solver Configuration: Configure a low-frequency quasi-static solver. Set boundary conditions to "ground at infinity." Assign tissue-specific conductivity (σ) and permittivity (ε) from the IT'IS database at the target frequency (1-5 kHz for gradient switching).

- Simulation Execution: Run the EM simulation distributed across all 32 cores. Monitor convergence of the E-field solution.

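- Post-Processing & Nerve Analysis: Export the 3D E-field distribution. Define linear or curvilinear nerve trajectories along major peripheral nerves. Use the built-in "Neuron" cable model solver to compute the activating function, i.e., the second spatial derivative of the extracellular potential along the fiber (∂²V_e/∂s², equivalently −∂E_s/∂s for the field component E_s tangent to the trajectory), and simulate membrane potential dynamics along the nerve.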

- Threshold Determination: Iteratively scale the simulated coil current until the membrane potential at any node of Ranvier exceeds the depolarization threshold (typically 30-40 mV). Record this as the PNS threshold current.
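The activating function used in the post-processing step can be computed from the extracellular potential sampled at successive nodes along a trajectory with a central second difference. This is a minimal sketch assuming equally spaced nodes; the point-source helper and the numbers in the usage lines (1 mm spacing, electrode 2 mm above the fiber, 3 Ω·m resistivity, −1 mA cathodic current) are hypothetical test values, not outputs of the FEM model.

```python
import math
from typing import List, Sequence


def activating_function(v_e: Sequence[float], ds: float) -> List[float]:
    """Discrete activating function f_n = (V[n-1] - 2 V[n] + V[n+1]) / ds**2.

    v_e: extracellular potential (V) at equally spaced nodes along the fiber.
    ds:  node spacing (m), e.g. the internodal length of a myelinated fiber.
    Returns values for interior nodes only (len(v_e) - 2 entries), in V/m^2.
    """
    if len(v_e) < 3:
        raise ValueError("need at least three nodes")
    return [(v_e[n - 1] - 2.0 * v_e[n] + v_e[n + 1]) / ds ** 2
            for n in range(1, len(v_e) - 1)]


def point_source_potential(current: float, rho: float, height: float, s: float) -> float:
    """Potential of a monopolar point source in an infinite homogeneous medium
    (hypothetical test field, used here only to exercise activating_function)."""
    return rho * current / (4.0 * math.pi * math.hypot(height, s))


ds = 1e-3                                        # hypothetical node spacing: 1 mm
nodes = [(n - 10) * ds for n in range(21)]       # node 10 lies under the electrode
v_e = [point_source_potential(-1e-3, 3.0, 2e-3, s) for s in nodes]
f = activating_function(v_e, ds)
peak = max(range(len(f)), key=f.__getitem__)    # interior index; node index is peak + 1
```

Positive values mark nodes that the field depolarizes; with a cathodic point source the largest positive value falls at the node directly beneath the electrode.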

Expected Output: A single PNS threshold (in A/µs) for the given coil/body posture. The protocol must be repeated for multiple body models and postures to establish a safety margin.

Protocol 3.2: Validation of FEM Predictions Against In Vitro (Benchtop) Data

Objective: To calibrate and validate the computational PNS model using controlled measurements from a benchtop nerve setup.

Materials:

- In-Silico Component: As per Protocol 3.1, but using a simplified cylindrical phantom containing a saline-filled nerve chamber geometry.

- In-Vitro Component: Stimulation coil, saline bath, harvested frog sciatic nerve or synthetic axon bundle, recording electrodes, differential amplifier, signal generator.

Procedure:

- Construct Computational Phantom: Model the exact physical dimensions of the benchtop nerve chamber and coil in the FEM software.

- Predict Activation: For a range of input coil currents (I), compute the predicted E-field and subsequent nerve activation.

- Benchmark Experiment: On the benchtop, place the nerve in the chamber. Apply identical coil current waveforms. Measure the compound action potential (CAP) threshold.

- Correlation: Plot predicted activating function magnitude vs. measured CAP threshold current. Perform linear regression. Adjust the computational nerve model's rheobase/chronaxie parameters to minimize error.
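Target rheobase and chronaxie values for this adjustment can be estimated from thresholds measured at several pulse widths, as in the pulse-width repetitions of Protocol 2. The sketch below assumes Weiss's linear charge-duration law, Q_th = I_rh · (t + t_ch), holds over the tested range, so an ordinary least-squares line of threshold charge against pulse width has slope I_rh and intercept I_rh · t_ch. The synthetic measurements are hypothetical.

```python
from typing import Sequence, Tuple


def fit_weiss(pulse_widths: Sequence[float],
              thresholds: Sequence[float]) -> Tuple[float, float]:
    """Least-squares fit of Weiss's law Q_th = I_rh * (t + t_ch).

    pulse_widths: pulse durations t (s); thresholds: threshold currents I_th (A).
    Returns (rheobase I_rh in A, chronaxie t_ch in s).
    """
    if len(pulse_widths) != len(thresholds) or len(pulse_widths) < 2:
        raise ValueError("need at least two (pulse width, threshold) pairs")
    # Threshold charge per phase is linear in t: slope = I_rh, intercept = I_rh * t_ch.
    charges = [i * t for i, t in zip(thresholds, pulse_widths)]
    n = len(pulse_widths)
    mean_t = sum(pulse_widths) / n
    mean_q = sum(charges) / n
    s_tt = sum((t - mean_t) ** 2 for t in pulse_widths)
    if s_tt == 0:
        raise ValueError("pulse widths must not all be equal")
    s_tq = sum((t - mean_t) * (q - mean_q) for t, q in zip(pulse_widths, charges))
    rheobase = s_tq / s_tt
    intercept = mean_q - rheobase * mean_t
    return rheobase, intercept / rheobase


# Hypothetical noiseless data generated with I_rh = 1 mA and t_ch = 200 µs.
widths = [50e-6, 100e-6, 200e-6, 500e-6, 1e-3]          # s
measured = [1e-3 * (1 + 200e-6 / t) for t in widths]    # A
rheobase, chronaxie = fit_weiss(widths, measured)
```

Fitting charge rather than current keeps the problem linear; Lapicque's exponential form would instead need a nonlinear fit.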

Diagrams for Workflows and Relationships

Title: Traditional FEM PNS Prediction Workflow

Title: Thesis Context: From FEM Bottleneck to GPU Solution

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Resources for Traditional PNS Simulation Studies

| Category | Specific Tool / Reagent | Function / Purpose | Example Vendor/Provider |
|---|---|---|---|
| Anatomical Models | IT'IS Virtual Population (VIP) | Provides high-resolution, multi-tissue anatomical models for FEM meshing. Critical for realistic body heterogeneity. | IT'IS Foundation (Zürich) |
| FEM Simulation Software | Sim4Life, ANSYS HFSS/Maxwell, COMSOL Multiphysics | Integrated platform for EM solving, mesh generation, and built-in neural activation functions. Industry standard for regulatory submissions. | ANSYS, COMSOL, ZMT Zurich MedTech |
| Cable Equation Solver | NEURON Simulation Environment | Gold-standard software for modeling electrical behavior of neurons. Used for detailed nerve activation studies post-EM solve. | NEURON (Yale/Duke) |
| High-Performance Computing | Local Linux Cluster or Cloud (AWS EC2, Azure HBv3) | Provides the necessary CPU cores and RAM to execute large, high-fidelity simulations in a reasonable time. | On-premise, Amazon Web Services, Microsoft Azure |
| Validation Phantom | Gel/Saline Phantom with Embedded Fiber | Physical model with known electrical properties to validate simulated E-field distributions before animal/human studies. | Custom fabricated or from MRI phantom specialists (e.g., QalibreMD) |
| Tissue Property Database | IT'IS Tissue Properties Database | Reference values for conductivity (σ) and permittivity (ε) across 10 Hz - 100 GHz. Essential for accurate material assignment in models. | IT'IS Foundation |

Peripheral Nerve Stimulation (PNS) is a critical field for therapeutic development, including neuromodulation devices and pharmaceuticals targeting neuropathic pain. A central challenge is predicting the activation threshold of nerve fibers in response to externally applied electric fields. Traditional biophysical simulations, such as those using the Hodgkin-Huxley formalism within finite-element method (FEM) volume conductor models, are computationally prohibitive. A single high-fidelity simulation for one fiber morphology, electrode configuration, and stimulus waveform can require hours to days on high-performance CPUs. This bottleneck stifles iterative design and large-scale parameter exploration essential for innovation. GPU-accelerated surrogate models—fast, data-driven approximations of these high-fidelity simulators—promise to collapse this timeline from days to seconds, enabling rapid in-silico prototyping and hypothesis testing.

Core Quantitative Findings: Simulation vs. Surrogate Model Performance

The following table summarizes the performance differential between traditional simulations and emerging surrogate model approaches, based on current literature and benchmark studies.

Table 1: Performance Comparison of Traditional Simulation vs. GPU-Accelerated Surrogate Models

| Metric | High-Fidelity FEM + Biophysical Model (CPU) | Deep Learning Surrogate Model (Inference on GPU) | Speedup Factor |
|---|---|---|---|
| Time per Prediction | 2-48 hours | 10-500 milliseconds | ~10⁴-10⁷ |
| Hardware | High-end CPU cluster | Single GPU (e.g., NVIDIA A100, V100) | - |
| Scalability | Poor; cost grows linearly with the number of designs evaluated | Excellent; batch processing of thousands of designs | - |
| Primary Cost | Computational time & energy | Initial training data generation & model training | - |
| Typical Use Case | Single design verification | Design space exploration, sensitivity analysis, real-time optimization | - |
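The speedup range in the first row follows from pairing the extremes of the two time ranges:

$$
\frac{2\ \text{h}}{500\ \text{ms}} = \frac{7\,200\ \text{s}}{0.5\ \text{s}} \approx 1.4 \times 10^{4},
\qquad
\frac{48\ \text{h}}{10\ \text{ms}} = \frac{172\,800\ \text{s}}{0.01\ \text{s}} \approx 1.7 \times 10^{7}.
$$

These ratios cover inference only; the one-time cost of generating training data and training the model, listed under Primary Cost, is not included.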

Table 2: Key Performance Metrics for Published Surrogate Models in Computational Neuroscience

| Model Architecture | Training Data Size (Simulations) | Prediction Error (Relative RMSE on Threshold) | Reference Application |
|---|---|---|---|
| Fully Connected Neural Network | 50,000 | < 3% | Myelinated fiber activation (McIntyre et al. model) |
| Convolutional Neural Network (1D) | 150,000 | < 2% | Stimulation waveform optimization |
| Graph Neural Network | 25,000 | < 5% | Fibers of variable geometry and trajectory |
| Conditional Variational Autoencoder | 300,000 | < 1.5% | Generating optimal stimulus waveforms for target recruitment |

Application Notes & Protocols

AN-001: Protocol for Generating a Training Dataset for a PNS Surrogate Model

Objective: To generate a comprehensive, high-quality dataset of electric field simulations paired with neural activation thresholds for training a surrogate model.

Workflow:

- Parameter Space Definition: Define the ranges for key input parameters (e.g., electrode position (x, y, z), stimulus amplitude, pulse width, nerve fiber diameter, fiber-to-electrode distance).

- Design of Experiments (DoE): Use Latin Hypercube Sampling (LHS) to efficiently and uniformly sample thousands to millions of unique parameter combinations from the defined space.

- High-Fidelity Simulation Batch Execution:
  - Implement automated scripting (Python/bash) to generate simulation input files for each parameter set.
  - Use a distributed computing cluster or cloud-based HPC to run thousands of parallel simulations with a validated simulator (e.g., NEURON with extracellular stimulation, or COMSOL Multiphysics coupled with a biophysical model).
  - Each simulation outputs the transmembrane potential over time, from which the activation threshold is determined via a binary search or strength-duration analysis.

- Data Curation: Assemble a clean dataset in which each entry maps an input vector (parameters) to a scalar output (activation threshold).

- Data Partitioning: Split the dataset into training (70%), validation (15%), and test (15%) sets, ensuring no data leakage (e.g., compute normalization statistics on the training set only, and keep samples that share a geometry or near-identical parameters within a single split).
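The Design of Experiments step can be sketched with a small Latin Hypercube sampler that uses only the standard library. The parameter names and ranges below are hypothetical placeholders; production code would more likely use an established implementation such as SciPy's `scipy.stats.qmc.LatinHypercube`.

```python
import random
from typing import Dict, List, Tuple


def latin_hypercube(bounds: Dict[str, Tuple[float, float]],
                    n: int, seed: int = 0) -> List[Dict[str, float]]:
    """Draw n Latin Hypercube samples over the given (low, high) ranges.

    Each range is cut into n equal-width strata; every stratum is hit exactly
    once per parameter, and strata are paired across parameters at random.
    """
    rng = random.Random(seed)
    columns: Dict[str, List[float]] = {}
    for name, (low, high) in bounds.items():
        # One uniform draw inside each stratum k: (k + U[0, 1)) / n.
        unit = [(k + rng.random()) / n for k in range(n)]
        rng.shuffle(unit)  # independent shuffle per parameter gives random pairing
        columns[name] = [low + u * (high - low) for u in unit]
    return [{name: columns[name][i] for name in bounds} for i in range(n)]


# Hypothetical parameter ranges, for illustration only.
bounds = {
    "pulse_width_us": (10.0, 1000.0),
    "fiber_diameter_um": (2.0, 20.0),
    "fiber_to_electrode_mm": (0.5, 10.0),
}
design = latin_hypercube(bounds, n=100, seed=42)
```

Compared with independent uniform draws, the stratification guarantees that every slice of each parameter's range is represented, which matters when each sample costs a full high-fidelity simulation.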

Diagram Title: Surrogate Model Training Data Generation Workflow

AN-002: Protocol for Training and Validating a GPU-Accelerated Surrogate Model

Objective: To train a neural network surrogate model that predicts activation thresholds directly from input parameters, bypassing the need for full simulation.

Detailed Methodology:

- Data Preprocessing: Normalize input and output features (e.g., using StandardScaler from scikit-learn) to improve training stability.

- Model Architecture Selection:
  - Start with a standard Multi-Layer Perceptron (MLP) with 3-5 hidden layers (e.g., 256-512 nodes per layer).
  - Use ReLU activation functions for hidden layers.
  - Use a single linear output neuron (for regression).

- GPU-Accelerated Training:
  - Implement the model using a deep learning framework (PyTorch or TensorFlow).
  - Use `DataLoader` objects to stream mini-batches, moving each batch to GPU memory for efficient processing.
  - Use Mean Squared Error (MSE) loss and the Adam optimizer.
  - Train for up to a maximum number of epochs (e.g., 1000), with early stopping based on the validation loss to prevent overfitting.

- Model Validation:
  - Evaluate the trained model on the held-out test set.
  - Calculate key metrics: Root Mean Square Error (RMSE), Mean Absolute Percentage Error (MAPE), and the coefficient of determination (R²).
  - Perform a critical extrapolation test by evaluating the model on parameter combinations outside the training range to assess its reliability limits.
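The early-stopping rule and the validation metrics named above fit in a few lines without any framework. This is a sketch under stated assumptions: `EarlyStopping` is a hypothetical helper written for this example (Keras offers a comparable built-in callback, `tf.keras.callbacks.EarlyStopping`), `patience` counts validation checks, and MAPE assumes every true threshold is non-zero. The loss history and threshold values are hypothetical.

```python
import math
from dataclasses import dataclass
from typing import Dict, Sequence


def regression_metrics(y_true: Sequence[float], y_pred: Sequence[float]) -> Dict[str, float]:
    """RMSE, MAPE (%) and R^2 for predicted vs. simulated activation thresholds."""
    n = len(y_true)
    residuals = [t - p for t, p in zip(y_true, y_pred)]
    ss_res = sum(r * r for r in residuals)
    mean_true = sum(y_true) / n
    ss_tot = sum((t - mean_true) ** 2 for t in y_true)
    return {
        "rmse": math.sqrt(ss_res / n),
        # MAPE is undefined if any true threshold is zero.
        "mape_percent": 100.0 * sum(abs(r / t) for r, t in zip(residuals, y_true)) / n,
        "r2": 1.0 - ss_res / ss_tot,
    }


@dataclass
class EarlyStopping:
    """Stop when validation loss fails to improve by more than min_delta
    for `patience` consecutive validation checks."""
    patience: int = 20
    min_delta: float = 0.0
    best_loss: float = math.inf
    best_epoch: int = -1
    checks_without_improvement: int = 0

    def update(self, epoch: int, val_loss: float) -> bool:
        """Record one validation loss; return True when training should stop."""
        if val_loss < self.best_loss - self.min_delta:
            # Improvement: a real training loop would checkpoint the weights here.
            self.best_loss, self.best_epoch = val_loss, epoch
            self.checks_without_improvement = 0
        else:
            self.checks_without_improvement += 1
        return self.checks_without_improvement >= self.patience


stopper = EarlyStopping(patience=3)
stop_epoch = None
for epoch, loss in enumerate([1.0, 0.8, 0.7, 0.71, 0.72, 0.73, 0.5]):
    if stopper.update(epoch, loss):
        stop_epoch = epoch
        break
metrics = regression_metrics([1.0, 2.0, 3.0, 4.0], [1.1, 1.9, 3.2, 3.8])
```

Here training stops at epoch 5 after three non-improving checks, and the weights to restore are those from epoch 2, the best validation loss.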

Diagram Title: Surrogate Model Training & Validation Logic

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Computational Tools & Resources for PNS Surrogate Modeling

| Item / Solution | Function & Role in the Workflow |
|---|---|
| NEURON Simulation Environment | Gold-standard biophysical simulation platform for modeling electrical activity in neurons. Used to generate ground-truth activation data. |
| COMSOL Multiphysics with AC/DC Module | Finite Element Analysis (FEA) software for calculating the electric field distribution from electrodes in complex tissue geometries. |
| PyTorch / TensorFlow | Core deep learning frameworks providing automatic differentiation and GPU-accelerated tensor operations for building and training surrogate models. |
| NVIDIA CUDA & cuDNN | Parallel computing platform and library essential for leveraging GPU hardware acceleration, drastically reducing training and inference times. |
| SLURM Workload Manager | Job scheduler for managing and distributing thousands of high-fidelity simulation jobs across an HPC cluster during dataset generation. |
| Weights & Biases (W&B) | Experiment tracking tool to log training metrics, hyperparameters, and model outputs, facilitating reproducibility and analysis. |
| Docker / Singularity | Containerization solutions to package the entire software environment (simulators, ML libraries) ensuring consistent, reproducible results across different systems. |

The integration of GPU-accelerated surrogate models into the PNS research pipeline represents a paradigm shift. By converting a process that once took days into one that completes in seconds, these models unlock the potential for exhaustive design space exploration, real-time closed-loop optimization of stimulus waveforms, and robust sensitivity analyses. This acceleration is not merely a matter of convenience; it is a fundamental enabler for the rapid, iterative design cycles required to develop the next generation of precise and effective neuromodulation therapies and neuro-targeted pharmaceuticals.

Within the development of GPU-accelerated surrogate models for peripheral nerve stimulation (PNS) research, the parallel architecture of modern GPUs is indispensable. These models replace computationally intensive, high-fidelity biophysical simulations—which solve complex systems of partial differential equations (PDEs) governing nerve fiber activation—with fast, data-driven neural network approximations. Training such surrogate models requires processing vast datasets of simulated electric fields, tissue properties, and resulting neural activation thresholds. GPU computing accelerates both the generation of this training data and the iterative optimization of deep neural networks by several orders of magnitude, making parametric studies and patient-specific treatment planning clinically feasible. For inference, trained models deployed on GPU-enabled workstations or embedded systems allow researchers and clinicians to predict neural responses to novel stimulation patterns in real-time, enabling rapid prototyping of novel neuromodulation therapies.

Current State & Quantitative Benchmarks

The following tables summarize recent performance data for GPU-accelerated neural network training and biophysical simulation, key to PNS surrogate model development.

Table 1: Comparative Training Times for Representative Neural Network Architectures on Modern GPU Platforms (Single Epoch on Synthetic PNS Dataset ~100,000 Samples)

| Neural Network Architecture | Parameters (Millions) | NVIDIA A100 (80GB) Time (s) | NVIDIA H100 (80GB) Time (s) | Speedup (A100 time / H100 time) |
|---|---|---|---|---|
| Dense Fully Connected (5-layer) | 15.2 | 4.1 | 2.8 | 1.46x |
| Convolutional Neural Network (CNN) | 8.7 | 7.5 | 4.1 | 1.83x |
| Graph Neural Network (GNN) | 6.3 | 12.2 | 6.5 | 1.88x |
| Vision Transformer (ViT-base) | 86.0 | 22.8 | 10.1 | 2.26x |

Data synthesized from recent MLPerf benchmarks and published research on neural simulation (2024).

Table 2: Acceleration of Core Biophysical Simulation Components for PNS Training Data Generation via GPU

| Simulation Component | CPU (Intel Xeon 8380) Runtime (s) | GPU (NVIDIA A100) Runtime (s) | Speedup Factor |
|---|---|---|---|
| Finite Element Method (FEM) Electric Field Solve | 1450 | 18.5 | 78x |
| Multi-compartment Nerve Cable Model (100 fibers) | 320 | 4.2 | 76x |
| Activation Threshold Convergence (Per parameter set) | 89 | 1.1 | 81x |

Data derived from benchmarks in studies using COMSOL with GPU solvers and custom CUDA code for Hodgkin-Huxley-type models (2023-2024).

Experimental Protocols for PNS Surrogate Model Development

Protocol 3.1: Generation of High-Fidelity Training Data Using GPU-Accelerated Biophysical Simulation

Objective: To efficiently generate a large, diverse dataset of electric field distributions and corresponding axon activation thresholds for training a surrogate neural network.

Materials: High-performance computing node with NVIDIA GPU (A100 or later), COMSOL Multiphysics with LiveLink for MATLAB, or custom CUDA/C++ FEM solver; anatomical nerve geometry model (e.g., from the Visible Human Project); tissue property library.

Procedure:

- Geometry & Meshing: Import 3D nerve (e.g., sciatic) and surrounding tissue geometry. Generate a high-quality volumetric mesh. Export mesh data.

- GPU-Accelerated FEM Solver Setup:
  - Configure the electrostatic (quasi-static) PDE $\nabla \cdot (\sigma \nabla V) = 0$ with Dirichlet boundary conditions for electrode potentials.
  - Assign tissue-specific conductivity values (σ) to domains.
  - Use a GPU-optimized linear algebra solver (e.g., the AmgX library, running a conjugate gradient method with algebraic multigrid preconditioning) within the simulation environment.

- Parameter Sweep Execution:
  - Script a sweep over stimulation parameters: electrode position (X, Y, Z), amplitude (0.1-10 mA), frequency (1-100 Hz), pulse width (10-1000 µs).
  - For each electrode configuration, launch the GPU-accelerated FEM solve on a cluster, queueing thousands of jobs to maximize throughput. Because $\nabla \cdot (\sigma \nabla V) = 0$ is linear and contains no time dependence, one solve per electrode configuration can be rescaled to every amplitude; frequency and pulse width enter only the axon model.
  - Extract the resulting electric field vector (E) distribution along predefined axon trajectories.

- Axon Model Evaluation:
  - For each E-field, compute the activating function (second spatial derivative of the extracellular potential) along the model axon(s).
  - Integrate the cable equation with a standard membrane model (e.g., Hodgkin-Huxley, Frankenhaeuser-Huxley) using a GPU-ported ODE integrator (e.g., Runge-Kutta) to determine the activation threshold (the lowest amplitude producing a propagating action potential).

- Dataset Assembly: Assemble `[Stimulation Parameters, Electric Field Map, Activation Threshold]` tuples into a structured dataset (e.g., HDF5 format).
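Discretizing $\nabla \cdot (\sigma \nabla V) = 0$ yields a sparse, symmetric positive-definite linear system, which is why conjugate-gradient-type solvers such as those AmgX runs on the GPU suit it. The toy below is a one-dimensional, CPU-only sketch that uses plain, unpreconditioned conjugate gradient in place of AmgX's multigrid-preconditioned solvers; the two-layer conductivity profile is hypothetical. It also illustrates that the solution scales linearly with the electrode potential.

```python
from typing import List, Sequence


def _dot(a: Sequence[float], b: Sequence[float]) -> float:
    return sum(x * y for x, y in zip(a, b))


def solve_1d_conductor(sigma: Sequence[float], h: float, v_left: float, v_right: float,
                       rel_tol: float = 1e-12, max_iter: int = 1000) -> List[float]:
    """Solve d/dx(sigma dV/dx) = 0 on a chain of len(sigma) segments of length h.

    Electrode potentials are fixed (Dirichlet) at both ends; the interior nodes form
    a symmetric positive-definite tridiagonal system solved with plain CG.
    Returns the potential at all len(sigma) + 1 nodes.
    """
    g = [s / h for s in sigma]   # segment conductance per unit area (S/m^2)
    m = len(sigma) - 1           # interior unknowns; unknown j is node j + 1
    if m < 1:
        raise ValueError("need at least two segments")

    def matvec(v: List[float]) -> List[float]:
        # Current balance at node j + 1: segment j on its left, segment j + 1 on its right.
        out = []
        for j in range(m):
            val = (g[j] + g[j + 1]) * v[j]
            if j > 0:
                val -= g[j] * v[j - 1]
            if j < m - 1:
                val -= g[j + 1] * v[j + 1]
            out.append(val)
        return out

    # Known electrode potentials move to the right-hand side.
    b = [0.0] * m
    b[0] += g[0] * v_left
    b[-1] += g[-1] * v_right

    x = [0.0] * m
    r = b[:]                     # residual b - A x for the initial guess x = 0
    p = r[:]
    rs_old = _dot(r, r)
    stop = rel_tol ** 2 * max(rs_old, 1e-300)   # compare squared residual norms
    for _ in range(max_iter):
        if rs_old <= stop:
            break
        ap = matvec(p)
        alpha = rs_old / _dot(p, ap)
        x = [xk + alpha * pk for xk, pk in zip(x, p)]
        r = [rk - alpha * apk for rk, apk in zip(r, ap)]
        rs_new = _dot(r, r)
        p = [rk + (rs_new / rs_old) * pk for rk, pk in zip(r, p)]
        rs_old = rs_new
    return [v_left] + x + [v_right]


sigma = [1.0] * 10 + [0.1] * 10          # hypothetical two-layer conductor (S/m)
v = solve_1d_conductor(sigma, h=1e-3, v_left=1.0, v_right=0.0)
v2 = solve_1d_conductor(sigma, h=1e-3, v_left=2.0, v_right=0.0)
```

For these values the interface node sits at 10/11 V, matching the series-resistor result, and doubling `v_left` doubles every node potential.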

Protocol 3.2: Training a Deep Learning Surrogate Model on GPU Clusters

Objective: To train a neural network that maps stimulation parameters and/or low-dimensional field representations directly to activation thresholds.

Materials: GPU cluster (e.g., NVIDIA DGX system), Python with PyTorch or TensorFlow, a `DataLoader` configured to read HDF5, MLflow for experiment tracking.

Procedure:

- Data Preparation & Partitioning: Split the dataset 70/15/15 (train/validation/test). Normalize features (parameters, field values). Use PyTorch `Dataset` and `DataLoader` with `pin_memory=True` for efficient transfer to the GPU.

- Model Architecture Definition: Define a hybrid CNN-MLP network in PyTorch. The CNN encodes spatial E-field maps, and the MLP processes scalar stimulation parameters. The two feature vectors are concatenated before the final regression layers.

- Multi-GPU Training Configuration:
  - Wrap the model with `torch.nn.parallel.DistributedDataParallel` (or the simpler `torch.nn.DataParallel`) for multi-GPU training.
  - Set the loss function to Mean Squared Error (MSE) for threshold regression.
  - Choose an optimizer (AdamW) with learning rate scheduling (OneCycleLR).

- Training Loop:
  - For each epoch, iterate over the training `DataLoader`.
  - Forward pass: move the batch to the GPU (`batch.to(device)`) and compute the predicted threshold.
  - Compute the loss, clear stale gradients (`optimizer.zero_grad()`), perform the backward pass (`loss.backward()`), and take an optimizer step.
  - Validate every N steps, logging metrics to MLflow.

- Hyperparameter Optimization: Use Ray Tune or Optuna to perform a distributed hyperparameter search (learning rate, batch size, network depth) across multiple GPU nodes.
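The partitioning and normalization of the data-preparation step can be sketched as follows. The detail that prevents leakage is that normalization statistics come from the training indices only and are then reused unchanged for validation and test data; if several samples share one anatomical geometry or electrode layout, the same split function can shuffle group IDs instead of sample indices. The feature column is hypothetical.

```python
import math
import random
from typing import List, Sequence, Tuple


def split_70_15_15(n_samples: int, seed: int = 0) -> Tuple[List[int], List[int], List[int]]:
    """Return disjoint train/validation/test index lists (70/15/15, remainder to test)."""
    idx = list(range(n_samples))
    random.Random(seed).shuffle(idx)
    n_train = (70 * n_samples) // 100   # integer arithmetic avoids float rounding surprises
    n_val = (15 * n_samples) // 100
    return idx[:n_train], idx[n_train:n_train + n_val], idx[n_train + n_val:]


def fit_standardizer(train_column: Sequence[float]) -> Tuple[float, float]:
    """Mean and population standard deviation from the TRAINING split only
    (the same convention as scikit-learn's StandardScaler); a zero standard
    deviation is replaced by 1 to avoid division by zero."""
    mean = sum(train_column) / len(train_column)
    std = math.sqrt(sum((x - mean) ** 2 for x in train_column) / len(train_column))
    return mean, (std if std > 0 else 1.0)


def apply_standardizer(column: Sequence[float], mean: float, std: float) -> List[float]:
    """Scale any split with statistics fitted on the training split."""
    return [(x - mean) / std for x in column]


# Hypothetical single feature column (e.g., pulse width in µs) for 100 samples.
pulse_width = [10.0 + 9.9 * k for k in range(100)]
train_idx, val_idx, test_idx = split_70_15_15(len(pulse_width), seed=1)
mean, std = fit_standardizer([pulse_width[i] for i in train_idx])
val_scaled = apply_standardizer([pulse_width[i] for i in val_idx], mean, std)
```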

Protocol 3.3: Deployment and Real-Time Inference for Protocol Design

Objective: To integrate the trained surrogate model into a stimulation protocol design loop for rapid prediction.

Materials: GPU-enabled workstation (NVIDIA RTX A6000), TensorRT or ONNX Runtime, custom C++/Python API.

Procedure:

- Model Export & Optimization: Convert the trained PyTorch model to ONNX format. Use NVIDIA TensorRT to build a highly optimized inference engine for the target GPU, applying FP16 or INT8 quantization.

- Deployment Server Setup: Implement a gRPC or REST API server that loads the TensorRT engine. The server receives stimulation parameters and optionally low-resolution field previews as input.

- Inference Execution: For each request, the server executes the engine on the GPU. Batched requests are processed concurrently to maximize throughput.

- Integration with Design GUI: Link the inference server to a graphical treatment planning interface. As researchers adjust electrode placement and stimulation settings in the GUI, the surrogate model returns predicted activation thresholds with <100 ms latency, enabling interactive exploration.

Visualization: Workflows and Relationships

Title: GPU-Accelerated Workflow for PNS Surrogate Model Development & Deployment

Title: Data and Parallel Thread Flow in GPU-Accelerated Neural Network Training

The Scientist's Toolkit: Essential Research Reagents & Solutions

Table 3: Key Hardware, Software, and Computational Resources for GPU-Accelerated PNS Research

| Item Name & Vendor/Developer | Category | Primary Function in PNS Surrogate Modeling |
|---|---|---|
| NVIDIA DGX H100 System | Hardware | Integrated 8-GPU system for large-scale model training and data generation via massive parallelization; multiple systems can be clustered. |
| NVIDIA A100/A800 80GB PCIe GPU | Hardware | High-memory GPUs for processing large 3D field maps and batch sizes during training. |
| CUDA Toolkit & cuDNN (NVIDIA) | Software | Core libraries for GPU-accelerated linear algebra and deep neural network primitives. |
| PyTorch with DistributedDataParallel (Meta) | Software | Flexible deep learning framework with built-in support for multi-GPU and multi-node training. |
| NVIDIA TensorRT | Software | High-performance deep learning inference optimizer and runtime for low-latency deployment. |
| COMSOL Multiphysics with LiveLink for MATLAB | Software | Platform for high-fidelity FEM simulations; GPU acceleration available for specific solvers. |
| NEURON Simulation Environment (with GPU extensions) | Software | For porting compartmental nerve cable models to GPU, accelerating ground-truth data generation. |
| SLURM Workload Manager | Software | Job scheduling for managing large parameter sweeps across HPC clusters with GPU nodes. |
| HDF5 Data Format | Data Management | Efficient, hierarchical format for storing and accessing large, multi-dimensional simulation datasets. |
| MLflow (Databricks) | Software | Open-source platform for managing the machine learning lifecycle, tracking experiments, and deploying models. |

Peripheral Nerve Stimulation (PNS) modeling and surrogate approaches are critical in neuropharmacology and neuromodulation research. This review synthesizes current methodologies within the paradigm of accelerating these models via GPU computing, focusing on applications for predictive toxicology and therapeutic development.

Key Quantitative Findings in PNS & Surrogate Modeling

The following table summarizes core quantitative metrics from recent key studies.

Table 1: Comparative Performance of Recent PNS Modeling & Surrogate Approaches

| Model / Approach | Primary Application | Key Metric(s) Reported | Accuracy / Performance | Reference Year | Computational Platform |
|---|---|---|---|---|---|
| Multi-Scale FEM-NEURON | PNS Threshold Prediction | Axon Activation Threshold (V/m) | RMSE: 12.3% vs. in-vivo | 2022 | CPU Cluster |
| Deep Surrogate CNN | Electric Field to EMG Output Mapping | Prediction Latency (ms) | R² = 0.96, Speedup: 1000x vs. FEM | 2023 | NVIDIA A100 GPU |
| Graph Neural Network (GNN) | Whole-Nerve Recruitment Modeling | Recruitment Curve Error | MAE < 5% of max response | 2024 | NVIDIA V100 GPU |
| Hybrid PDE-Net | Predicting PNS in Moving Fields | Threshold Error for Pulse Trains | Error < 8% across frequencies | 2023 | GPU (RTX 4090) |
| Biophysical Lattice Model | Ion Channel Blockade Effect | Conduction Block Prediction Accuracy | Sensitivity: 0.89, Specificity: 0.92 | 2022 | Multi-core CPU |

Detailed Experimental Protocols

Protocol 3.1: In-Silico PNS Threshold Mapping with GPU-Accelerated FEM

Objective: To compute activation thresholds for a library of nerve trajectories within a simulated tissue volume.

- Geometry & Mesh Generation:

- Import nerve fascicle model (e.g., from Ultrastructure Model Database).

- Embed in homogeneous or multi-layer tissue compartment (fat, muscle, skin) using 3D modeling software (Blender, COMSOL).

- Generate tetrahedral volume mesh with element size refined to 0.1 mm at nerve boundaries.

- Electric Field Solution:

- Implement Laplace’s equation (∇·(σ∇V)=0) in a CUDA/C++ solver using the Finite Element Method (FEM).

- Apply boundary conditions: Dirichlet condition at electrode surface (stimulation voltage), Neumann condition (zero current) at outer boundaries.

- Solve using a Conjugate Gradient solver preconditioned with algebraic multigrid (AMG) from the NVIDIA AmgX library.

- Axon Activation Calculation:

- Extract electric field vectors (E) along predefined axon trajectories.

- Couple to multi-compartment cable models (e.g., MRG, Hodgkin-Huxley) using NEURON simulator, accelerated via CoreNEURON on GPU.

- Determine activation threshold via binary search: the minimum stimulus amplitude producing an action potential propagating 5 cm.

- Validation & Output:

- Compare threshold predictions against published in-vitro animal data (e.g., rat sciatic nerve).

- Output: 3D threshold isosurface maps and strength-duration curves for each nerve type.
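The binary-search step can be illustrated without NEURON. In this sketch the cable model is replaced with Rattay's activating-function criterion: the axon counts as activated when the second spatial difference of the extracellular potential along its trajectory reaches a fixed critical value. The point-source field in an infinite homogeneous medium, the conductivity `SIGMA`, the node spacing `NODE_DX`, and the critical value `F_CRIT` are illustrative assumptions. In the actual protocol, `is_activated` would wrap a CoreNEURON run that checks for an action potential propagating 5 cm.

```python
import numpy as np

SIGMA = 0.2      # homogeneous tissue conductivity, S/m (assumed)
NODE_DX = 1e-3   # node-of-Ranvier spacing along the axon, m (assumed)
F_CRIT = 5e3     # hypothetical critical activating-function value, V/m^2


def extracellular_potential(x, current, distance):
    """V_e (V) along the axon from a cathodic point source of magnitude `current` (A)
    placed `distance` (m) from the axon in an infinite homogeneous medium."""
    r = np.sqrt(x**2 + distance**2)
    return -current / (4.0 * np.pi * SIGMA * r)


def activating_function(v_e, dx):
    """Second spatial difference of V_e along the axon, V/m^2 (positive = depolarizing)."""
    return (v_e[:-2] - 2.0 * v_e[1:-1] + v_e[2:]) / dx**2


def is_activated(current, distance, n_nodes=101):
    """Stand-in for a cable-model run that checks for a propagating action potential."""
    x = (np.arange(n_nodes) - n_nodes // 2) * NODE_DX
    f = activating_function(extracellular_potential(x, current, distance), NODE_DX)
    return f.max() >= F_CRIT


def find_threshold(distance, lo=0.0, hi=1e-2, rel_tol=1e-4, max_iter=100):
    """Bisection for the minimum current (A) that activates the axon."""
    if not is_activated(hi, distance):
        raise ValueError("upper bound does not activate the axon; increase `hi`")
    for _ in range(max_iter):
        mid = 0.5 * (lo + hi)
        if is_activated(mid, distance):
            hi = mid   # activation: threshold lies in (lo, mid]
        else:
            lo = mid   # no activation: threshold lies in (mid, hi]
        if hi - lo <= rel_tol * hi:
            break
    return hi


if __name__ == "__main__":
    for d_mm in (1.0, 2.0, 4.0):
        print(f"distance {d_mm} mm -> threshold {find_threshold(d_mm * 1e-3) * 1e3:.3f} mA")
```

Bisection only needs a yes/no activation test, so the same loop works unchanged when the stand-in criterion is replaced by a full cable simulation. Each iteration halves the bracket, so a 10 mA starting bracket reaches 0.01% relative precision in roughly 20 simulations per trajectory.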

Protocol 3.2: Training a Deep Surrogate Model for Rapid EMG Prediction

Objective: To train a convolutional neural network (CNN) that predicts compound muscle action potential (CMAP) waveforms from stimulus parameters and electrode position.

- Training Dataset Generation:

- Use Protocol 3.1 to generate 50,000 unique simulations, varying electrode position (x, y, z), stimulus amplitude (0.1-10 mA), pulse width (20-1000 µs), and frequency (1-100 Hz).

- For each simulation, record the resulting simulated EMG at a target muscle as a 10-ms time-series waveform (sampled at 100 kHz).

- Network Architecture & Training:

- Input: A 4D tensor (stimulus parameters + 3D spatial grid of E-field magnitude at one time point).

- Architecture: 3D CNN with 5 encoding blocks (Conv3D, BatchNorm, ReLU) followed by a temporal decoder (1D Convolutions).

- Loss Function: Mean Squared Error (MSE) + Multi-Scale Spectral Loss.

- Training: Use PyTorch on 2x NVIDIA A100 GPUs. Optimizer: AdamW (lr=1e-4). Train for 200 epochs with early stopping.

- Validation & Deployment:

- Hold out 10% of data for testing. Evaluate using Normalized Root Mean Square Error (NRMSE) and Pearson correlation.

- Deploy trained model as a Python API for real-time (<10 ms) PNS prediction in interactive stimulation planning software.
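The protocol does not define the Multi-Scale Spectral Loss. One common interpretation, assumed here, compares log-magnitude short-time Fourier transforms at several FFT sizes (a multi-resolution STFT loss) and adds the result to the time-domain MSE. The FFT sizes, hop lengths, and the weight `alpha` are hypothetical.

```python
import torch
import torch.nn.functional as F


def multiscale_spectral_loss(pred, target, fft_sizes=(64, 128, 256), eps=1e-7):
    """Mean L1 distance between log-magnitude STFTs at several resolutions.

    pred, target: (batch, time) CMAP waveforms, e.g. 1000 samples (10 ms at 100 kHz).
    """
    loss = pred.new_zeros(())
    for n_fft in fft_sizes:
        window = torch.hann_window(n_fft, device=pred.device, dtype=pred.dtype)
        kwargs = dict(n_fft=n_fft, hop_length=n_fft // 4, window=window, return_complex=True)
        mag_p = torch.stft(pred, **kwargs).abs()
        mag_t = torch.stft(target, **kwargs).abs()
        # log compresses the dynamic range so low-energy frequency bands still contribute
        loss = loss + F.l1_loss(torch.log(mag_p + eps), torch.log(mag_t + eps))
    return loss / len(fft_sizes)


def cmap_loss(pred, target, alpha=0.1):
    """Time-domain MSE plus a weighted multi-scale spectral term (alpha is an assumption)."""
    return F.mse_loss(pred, target) + alpha * multiscale_spectral_loss(pred, target)


if __name__ == "__main__":
    torch.manual_seed(0)
    target = torch.randn(8, 1000)
    pred = (target + 0.1 * torch.randn(8, 1000)).requires_grad_(True)
    loss = cmap_loss(pred, target)
    loss.backward()
    print(f"loss {loss.item():.4f}")
```

Short FFT windows resolve fast transients in the waveform, and long windows resolve narrow-band content. Averaging across sizes avoids committing to a single time-frequency trade-off.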

Visualizations

Diagram 1: GPU-Accelerated PNS Modeling Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials & Computational Tools for PNS/Surrogate Research

| Item / Reagent Solution | Function in Research | Example Product / Library |
|---|---|---|
| High-Resolution Nerve Atlas | Provides anatomical geometry for realistic FEM modeling. | Visible Human Project; UNC Salted Histology Reconstructions. |
| Multi-Physics FEM Software | Solves governing equations for electric field distribution. | COMSOL Multiphysics with AC/DC Module; Sim4Life. |
| GPU-Accelerated Solver Libraries | Dramatically speeds up field and ODE solutions. | NVIDIA AmgX; GPU-accelerated CoreNEURON; CuPy. |
| Biophysical Cable Model Scripts | Defines ion channel dynamics and axon properties. | NEURON (.hoc/.mod); Brian2 (Python); OpenSourceBrain repositories. |
| Deep Learning Framework | Enables development and training of surrogate models. | PyTorch (with CUDA); TensorFlow; JAX. |
| In-Vitro PNS Validation Setup | Bench-top validation of model predictions. | Microelectrode array (MEA); Isolated nerve chamber (e.g., Bionix); Intracellular amplifier (Molecular Devices). |
| Parameter Sweep & HPC Manager | Automates large-scale simulation campaigns. | Slurm workload manager; custom Python pipelines; workflow managers (Snakemake, Nextflow). |

Building the Digital Twin: A Step-by-Step Guide to GPU-Accelerated PNS Surrogate Development

Application Notes

In the context of developing GPU-accelerated surrogate models for peripheral nerve stimulation (PNS) research, the creation of a robust, high-throughput data pipeline is critical. This pipeline serves as the foundational engine for sourcing and generating the large-scale, high-fidelity simulation data required to train accurate machine learning models that can predict neural response to stimulation, thereby accelerating therapeutic development.

Core Challenge: High-fidelity biophysical simulations (e.g., using finite-element methods for electric field calculation coupled to multicompartment neuron models) are computationally prohibitive for large-scale parameter exploration. A single simulation can take hours on high-performance computing clusters.

Pipeline Solution: The implemented pipeline automates the generation of a massive, diverse dataset by orchestrating simulation jobs across GPU-accelerated compute resources. It systematically varies key input parameters, executes the simulations, post-processes the outputs into a consistent format, and assembles a curated database for surrogate model training. This enables the generation of millions of data points that would otherwise be infeasible.

Key Quantitative Targets for PNS Model Training:

Table 1: Target Data Pipeline Output Specifications for PNS Surrogate Model Development

| Metric | Target Specification | Justification |
|---|---|---|
| Total Number of Simulation Samples | 500,000 - 5,000,000 | Required for deep neural network generalization across parameter space. |
| Parameter Dimensions per Sample | 10-15 (e.g., electrode position, amplitude, frequency, tissue conductivity) | Captures essential geometric and stimulus variables. |
| Output Metrics per Sample | 5-10 (e.g., activation threshold, recruitment curve slope, spatial spread) | Quantifies neural response for therapeutic optimization. |
| Simulation Runtime per Sample (GPU-accelerated) | < 60 seconds | Enables generation of the target dataset within weeks when jobs run concurrently on tens to hundreds of GPUs. |
| Final Dataset Size | 50 - 500 GB | Manageable for GPU-based training with efficient data loaders. |
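A back-of-the-envelope check of the runtime target follows. It assumes jobs are independent, scale perfectly across GPUs, and never fail; all three assumptions are optimistic. The 64-GPU and three-week figures are illustrative choices.

```python
import math

SECONDS_PER_DAY = 86_400


def wall_clock_days(n_samples, seconds_per_sample, n_gpus, jobs_per_gpu=1):
    """Ideal wall-clock time (days) for an embarrassingly parallel simulation sweep."""
    total_gpu_seconds = n_samples * seconds_per_sample
    return total_gpu_seconds / (n_gpus * jobs_per_gpu) / SECONDS_PER_DAY


def gpus_needed(n_samples, seconds_per_sample, target_days, jobs_per_gpu=1):
    """Minimum GPU count to finish within `target_days` under the same ideal assumptions."""
    budget = target_days * SECONDS_PER_DAY * jobs_per_gpu
    return math.ceil(n_samples * seconds_per_sample / budget)


if __name__ == "__main__":
    for n in (500_000, 5_000_000):
        print(f"{n:>9,} samples @ 60 s: {wall_clock_days(n, 60, 64):6.1f} days on 64 GPUs, "
              f"{gpus_needed(n, 60, 21)} GPUs for 3 weeks")
```

At 60 s per sample, the lower target (500,000 samples) needs about 5.4 days on 64 GPUs, or 17 GPUs to finish in three weeks. The upper target (5,000,000 samples) needs roughly 166 GPUs for the same three-week window.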

Experimental Protocols

Protocol 2.1: Automated High-Fidelity Simulation Batch Execution

Objective: To generate training data by executing thousands of variations of a validated PNS simulation model.

Materials & Software:

- Simulation Environment: COMSOL Multiphysics with LiveLink for MATLAB, or Sim4Life with Python API, or custom FEniCS/NEURON pipeline.

- Compute Infrastructure: SLURM-based HPC cluster or cloud platform (e.g., AWS ParallelCluster, Google Cloud Batch) with GPU nodes.

- Orchestration Script: Python-based master script using `subprocess`, `dask-jobqueue`, or `ray` for job management.

- Parameter Table: CSV file defining the full-factorial or Latin Hypercube Sampling design of input parameters.

Procedure:

- Parameter Space Definition: Using a Python script (`generate_parameter_sweep.py`), create a master CSV file where each row defines a unique simulation job. Parameters include electrode geometry (x, y, z), stimulus waveform parameters (pulse width, frequency, amplitude range), and tissue properties (conductivity values for fat, muscle, nerve).

- Job Preparation: For each row in the CSV, the master script generates a unique simulation input file (e.g., a modified MATLAB `.m` script or a Python dictionary) and a corresponding job submission script for the cluster.

- Cluster Submission: The master script submits all jobs to the cluster queue, ensuring no node is overloaded, and monitors job status (`sacct` or `qstat`).

- Data Harvesting: Upon job completion, a post-processing script (e.g., `extract_results.py`) is called automatically. It loads the simulation output, extracts key metrics (activation threshold via the activating function, volume of activated tissue), and saves them in a standardized format (e.g., NumPy `.npz` or HDF5).

- Failure Handling: Failed jobs (due to non-convergence or memory errors) are logged, and their parameters are written to a retry queue with adjusted solver settings.
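A minimal sketch of what `generate_parameter_sweep.py` could contain. It implements Latin Hypercube sampling directly in NumPy; SciPy's `scipy.stats.qmc.LatinHypercube` would serve equally well. The parameter names and ranges are illustrative placeholders, not validated values, and the `run_000000` job-ID format is an assumption.

```python
import csv
import numpy as np

# Hypothetical parameter ranges (name: (low, high)); placeholders, not validated values.
PARAM_RANGES = {
    "electrode_x_mm": (-5.0, 5.0),
    "electrode_y_mm": (-5.0, 5.0),
    "electrode_z_mm": (0.5, 10.0),
    "pulse_width_us": (20.0, 1000.0),
    "frequency_hz": (1.0, 100.0),
    "amplitude_max_mA": (0.1, 10.0),
    "sigma_fat_S_per_m": (0.02, 0.08),
    "sigma_muscle_S_per_m": (0.1, 0.5),
}


def latin_hypercube(n_samples, n_dims, rng):
    """Points in [0, 1)^n_dims with exactly one point per stratum in every dimension."""
    # One random offset inside each of the n_samples strata, for every column
    u = (np.arange(n_samples)[:, None] + rng.random((n_samples, n_dims))) / n_samples
    # Shuffle strata independently per column so columns are not correlated
    for j in range(n_dims):
        u[:, j] = u[rng.permutation(n_samples), j]
    return u


def write_sweep(path, n_samples, seed=0):
    """Write one CSV row per simulation job and return the sampled parameter matrix."""
    rng = np.random.default_rng(seed)
    names = list(PARAM_RANGES)
    low = np.array([PARAM_RANGES[k][0] for k in names])
    high = np.array([PARAM_RANGES[k][1] for k in names])
    samples = low + latin_hypercube(n_samples, len(names), rng) * (high - low)
    with open(path, "w", newline="") as fh:
        writer = csv.writer(fh)
        writer.writerow(["job_id", *names])
        for i, row in enumerate(samples):
            writer.writerow([f"run_{i:06d}", *(f"{v:.6g}" for v in row)])
    return samples
```

Calling `write_sweep("parameter_sweep.csv", 10_000)` writes a header plus one row per job for the master script to consume. Unlike a full-factorial grid, the sample count does not grow exponentially with the number of parameters.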

Protocol 2.2: Data Curation and Quality Control for Surrogate Model Training

Objective: To assemble raw simulation outputs into a clean, balanced, and ready-to-use dataset for machine learning.

Procedure:

- Aggregation: All individual result files are collected into a single HDF5 database with a structured hierarchy (`/parameter/run_001`, `/results/run_001`).

- Validation & Filtering:

- Physiological Plausibility Check: Remove samples where the calculated activation threshold is outside a predefined range (e.g., >20 V for the given geometry).

- Convergence Check: Flag samples where the finite-element solver did not converge (residuals > 1e-4).

- Outlier Detection: Use isolation forest or IQR method on output metrics to remove statistical outliers.

- Partitioning: Split the curated dataset into training (70%), validation (15%), and test (15%) sets, ensuring stratification across key parameter ranges (e.g., electrode distance).

- Normalization: Fit a `StandardScaler` (from scikit-learn) to the input parameter matrix and a `MinMaxScaler` to the output matrix, using the training split only so that validation and test statistics do not leak into training. Save the scalers for inverse transformation during model deployment.

- Versioning: The final dataset is versioned and stored with a manifest file detailing the simulation software version, parameter ranges, and quality control steps applied.
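The filtering, partitioning, and normalization steps can be sketched in NumPy. The sketch assumes the aggregated inputs `X`, outputs `Y`, and solver residuals are already loaded from the HDF5 file as arrays; synthetic arrays stand in here. The 20 V plausibility bound and 1e-4 residual limit come from the steps above. The 1.5×IQR rule, the five quantile bins, and using column 0 as the electrode-distance stratification key are illustrative choices. The scaler functions reproduce the arithmetic of scikit-learn's `StandardScaler` and `MinMaxScaler`.

```python
import numpy as np


def qc_mask(thresholds, residuals, max_threshold=20.0, max_residual=1e-4, iqr_k=1.5):
    """Keep samples that are physiologically plausible, converged, and not IQR outliers."""
    plausible = (thresholds > 0) & (thresholds <= max_threshold)
    converged = residuals <= max_residual
    q1, q3 = np.percentile(thresholds[plausible & converged], [25, 75])
    iqr = q3 - q1
    inlier = (thresholds >= q1 - iqr_k * iqr) & (thresholds <= q3 + iqr_k * iqr)
    return plausible & converged & inlier


def stratified_split(strat_key, n_bins=5, fractions=(0.70, 0.15, 0.15), seed=0):
    """Split row indices 70/15/15 separately within quantile bins of a stratification key."""
    rng = np.random.default_rng(seed)
    edges = np.quantile(strat_key, np.linspace(0, 1, n_bins + 1)[1:-1])
    bins = np.digitize(strat_key, edges)
    splits = ([], [], [])
    for b in np.unique(bins):
        idx = rng.permutation(np.flatnonzero(bins == b))
        n_train = int(round(fractions[0] * len(idx)))
        n_val = int(round(fractions[1] * len(idx)))
        splits[0].append(idx[:n_train])
        splits[1].append(idx[n_train:n_train + n_val])
        splits[2].append(idx[n_train + n_val:])
    return tuple(np.concatenate(s) for s in splits)


def fit_standard(x):
    """Column mean and population std (as StandardScaler); constant columns get scale 1."""
    mean, std = x.mean(axis=0), x.std(axis=0)
    return mean, np.where(std > 0, std, 1.0)


def fit_minmax(y):
    """Column min and range (as MinMaxScaler to [0, 1]); constant columns get range 1."""
    lo, hi = y.min(axis=0), y.max(axis=0)
    return lo, np.where(hi > lo, hi - lo, 1.0)


rng = np.random.default_rng(42)                        # synthetic stand-in for the database
X = rng.uniform(0, 1, size=(1000, 3))
Y = np.column_stack([rng.gamma(2.0, 2.0, 1000), rng.normal(size=1000)])
residuals = rng.uniform(0, 2e-4, 1000)

keep = qc_mask(Y[:, 0], residuals)
X, Y = X[keep], Y[keep]
train, val, test = stratified_split(X[:, 0])
x_mean, x_std = fit_standard(X[train])                 # fit on training rows only
y_min, y_range = fit_minmax(Y[train])
X_norm = (X - x_mean) / x_std
Y_norm = (Y - y_min) / y_range
```

The stored `(x_mean, x_std, y_min, y_range)` tuples play the role of the saved scalers. At deployment, predictions are mapped back with `Y_norm * y_range + y_min`.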

Visualizations

Diagram 1: Data pipeline for generating PNS training data

Diagram 2: Loop for single PNS simulation and validation

The Scientist's Toolkit

Table 2: Key Research Reagent Solutions for PNS Data Pipeline

| Item | Function in Pipeline | Example Product/Software |
|---|---|---|
| Multi-Physics FEM Solver | Computes the electric field distribution in anatomically accurate tissue models from stimulation. | COMSOL Multiphysics, Sim4Life, ANSYS Maxwell. |
| Neural Dynamics Solver | Simulates the response of individual axons or neurons to the computed electric field. | NEURON, Brian, CoreNEURON. |
| GPU-Accelerated Computing Platform | Drastically reduces simulation and model training time via parallel processing. | NVIDIA DGX/A100, Cloud GPUs (AWS EC2 P4, GCP A2). |
| Workflow Orchestration Framework | Manages the submission, execution, and monitoring of thousands of simulation jobs. | Nextflow, Apache Airflow, Snakemake, custom Python/Dask. |
| Data Format & Storage | Stores large-scale, heterogeneous simulation data in an efficient, hierarchical format. | HDF5, Apache Parquet, Zarr. |
| Automated QC & Analysis Library | Scripts for extracting features, validating results, and detecting outliers. | Pandas, NumPy, SciPy, scikit-learn. |
| Surrogate Model Framework | Builds and trains the fast-evaluating ML model (e.g., neural network) on the simulation data. | TensorFlow, PyTorch, JAX. |
| Data Versioning Tool | Tracks different versions of the generated dataset to ensure reproducibility. | DVC (Data Version Control), Git LFS. |

Within the context of GPU-accelerated surrogate modeling for peripheral nerve stimulation (PNS) research, selecting the optimal neural network architecture is critical. Surrogate models accelerate the simulation of electromagnetic fields and neural activation, which is essential for safety assessment in medical devices and therapeutic development. This document provides Application Notes and Protocols for three candidate architectures: standard Deep Neural Networks (DNNs), Convolutional Neural Networks (CNNs), and Physics-Informed Neural Networks (PINNs).

Table 1: Architectural Comparison for PNS Surrogate Modeling

| Feature | Deep Neural Network (DNN) | Convolutional Neural Network (CNN) | Physics-Informed Neural Network (PINN) |
|---|---|---|---|
| Core Strength | Universal function approximation; flexible for arbitrary input-output mappings. | Automated spatial feature extraction; efficient for grid-based field data. | Incorporates governing PDEs (e.g., Maxwell's, activating function) directly into loss. |
| Typical Input | Vectorized parameters (e.g., coil position, amplitude, tissue conductivity). | Structured spatial data (e.g., 2D/3D MRI/CT slices, electric field maps). | Spatial coordinates (x,y,z) and stimulation parameters; can work with/without labeled data. |
| Primary Loss Function | Mean Squared Error (MSE) between predicted and simulated output. | MSE on spatially-correlated outputs (e.g., potential distributions). | Composite loss: Data MSE + λ * Physics Residual (from PDE). |
| Data Efficiency | Low to moderate; requires large datasets for generalization. | Moderate; benefits from translational invariance in data. | High; can be trained with sparse or no labeled data by leveraging physics. |
| Interpretability | Low ("black-box"). | Moderate (visualization of feature maps). | High; adherence to known physical laws provides inherent interpretability. |
| Computational Cost (Training) | Low to Moderate. | Moderate (depends on depth). | High; requires auto-diff for PDE residuals, but often fewer labeled data points. |
| Best Suited For | Quick surrogate models for low-dimensional parameter spaces. | Predicting full-field distributions from imaging or simulation data. | High-fidelity models in data-scarce regimes; ensuring physical plausibility. |

Table 2: Recent Benchmark Performance (Summarized from Literature)

| Model Type | Application in PNS/Neurostimulation | Mean Relative Error (%) | Key Advantage Demonstrated | Reference Year |
|---|---|---|---|---|
| DNN (MLP) | Predicting activation thresholds for coil positions | ~8-12% | Fast inference (<1 ms) | 2022 |
| 3D CNN | Electric field prediction from MRI-based models | ~4-7% | Captures spatial correlations efficiently | 2023 |
| PINN | Solving the activating function in inhomogeneous tissues | ~1-3% | Accurate with only boundary condition data | 2024 |

Experimental Protocols

Protocol 1: Training a DNN Surrogate for Threshold Prediction

Objective: To create a fast surrogate model that maps stimulation parameters (coil location, orientation, current) to predicted neural activation threshold.

- Data Generation: Use a high-fidelity FEM solver (e.g., Sim4Life, COMSOL) to simulate the electric field (E-field) for 10,000+ parameter combinations within the region of interest. Derive the activating function (AF) or a simplified threshold metric.

- Preprocessing: Vectorize all input parameters (normalize to [0,1]). Split data 70/15/15 for training, validation, and testing.

- Model Definition: Implement a 5-layer Dense Neural Network (e.g., 256-128-64-32-1 nodes) with ReLU activations and dropout (rate=0.2) for regularization.

- GPU-Accelerated Training: Train using the Adam optimizer (lr=1e-4) with MSE loss on GPU hardware (e.g., NVIDIA A100 nodes of a GPU cluster). Use early stopping based on validation loss.

- Validation: Compare predicted vs. simulated thresholds on the test set. Calculate RMSE and relative error.
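A compact PyTorch sketch of the model-definition and training steps. It assumes 7 normalized inputs (e.g., coil position x, y, z, three orientation components, and current) with targets shaped `(N, 1)`. The `EarlyStopping` helper and its `patience` value are hypothetical. In practice, the training and validation tensors would come from the 70/15/15 split.

```python
import copy
import torch
import torch.nn as nn


def make_threshold_mlp(n_inputs=7, hidden=(256, 128, 64, 32), p_drop=0.2):
    """256-128-64-32-1 fully connected network with ReLU activations and dropout."""
    layers, width = [], n_inputs
    for h in hidden:
        layers += [nn.Linear(width, h), nn.ReLU(), nn.Dropout(p_drop)]
        width = h
    layers.append(nn.Linear(width, 1))
    return nn.Sequential(*layers)


class EarlyStopping:
    """Signal a stop when validation loss has not improved for `patience` epochs."""

    def __init__(self, patience=10):
        self.patience, self.best, self.bad_epochs, self.best_state = patience, float("inf"), 0, None

    def step(self, val_loss, model):
        if val_loss < self.best:
            self.best, self.bad_epochs = val_loss, 0
            self.best_state = copy.deepcopy(model.state_dict())   # keep the best weights
        else:
            self.bad_epochs += 1
        return self.bad_epochs >= self.patience


def train(model, x_tr, y_tr, x_val, y_val, epochs=200, batch=256, lr=1e-4, patience=10):
    device = next(model.parameters()).device
    opt = torch.optim.Adam(model.parameters(), lr=lr)
    loss_fn, stopper = nn.MSELoss(), EarlyStopping(patience)
    for _ in range(epochs):
        model.train()
        for idx in torch.randperm(len(x_tr)).split(batch):      # shuffled mini-batches
            opt.zero_grad(set_to_none=True)
            loss_fn(model(x_tr[idx].to(device)), y_tr[idx].to(device)).backward()
            opt.step()
        model.eval()                                            # disables dropout for validation
        with torch.no_grad():
            val = loss_fn(model(x_val.to(device)), y_val.to(device)).item()
        if stopper.step(val, model):
            break
    model.load_state_dict(stopper.best_state)                   # restore best-validation weights
    return stopper.best
```

Restoring the best-validation weights after stopping means the returned model matches the reported validation loss, rather than the slightly overfit final epoch.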

Protocol 2: Training a CNN for 3D E-Field Map Prediction

Objective: To predict the full 3D E-field magnitude distribution given a 3D tissue conductivity map as input.

- Data Preparation: Generate paired datasets: Input = 3D matrix of tissue conductivity values (from segmentation). Output = Corresponding 3D E-field magnitude from FEM. Use ~5000 paired 128x128x128 volumes.

- Architecture: Implement a 3D U-Net architecture. The encoder uses 3D convolutional layers with stride 2 for downsampling. The decoder uses transposed convolutions. Skip connections preserve spatial details.

- Training: Use a combined loss: L1 loss for sharpness + structural similarity (SSIM) loss for perceptual quality. Train on multiple GPUs using data parallelism.

- Evaluation: Quantitatively assess using normalized root mean square error (NRMSE) over the entire volume and within specific tissues (e.g., nerve bundles).
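The evaluation step needs an NRMSE normalization convention, which the protocol leaves open. The sketch below assumes normalization by the range of the reference field. It computes NRMSE over the whole volume and inside boolean tissue masks derived from integer segmentation labels. The label IDs and the 32³ synthetic volumes are hypothetical.

```python
import numpy as np


def nrmse(pred, ref, mask=None):
    """RMSE divided by the range of `ref` (undefined if `ref` is constant inside the mask)."""
    if mask is not None:
        pred, ref = pred[mask], ref[mask]
    rmse = np.sqrt(np.mean((pred - ref) ** 2))
    return rmse / (ref.max() - ref.min())


def per_tissue_nrmse(pred, ref, labels, tissue_ids):
    """NRMSE inside each labelled tissue, e.g. tissue_ids = {"nerve": 3, "muscle": 2}."""
    return {name: nrmse(pred, ref, labels == tid) for name, tid in tissue_ids.items()}


rng = np.random.default_rng(0)                                  # synthetic 32^3 volumes
ref = rng.random((32, 32, 32))
pred = ref + rng.normal(scale=0.01, size=ref.shape)
labels = rng.integers(0, 4, size=ref.shape)                     # hypothetical segmentation
scores = {"whole_volume": nrmse(pred, ref),
          **per_tissue_nrmse(pred, ref, labels, {"muscle": 2, "nerve": 3})}
```

Reporting the nerve-only score separately matters because errors in small nerve bundles can be hidden by a good whole-volume average dominated by bulk tissue.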

Protocol 3: Training a PINN for the Activating Function PDE

Objective: To solve the PDE underlying the activating function without relying on dense labeled FEM data.

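- Physics Formulation: Define the residual of the governing PDE. For PNS, a common choice is the quasi-static volume-conductor equation with a source term:

r = ∇·(σ∇V) − f(V, ∂V/∂t, stimulus),

where V is the electric potential, σ is the tissue conductivity, and f collects stimulus and membrane source terms. The activating function is then evaluated from V along nerve trajectories.

- Collocation Points: Generate a set of 50,000+ random collocation points within the spatial domain and on boundaries. Only a small subset (<100) may have "labeled" FEM data.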

- Network Design: Use a multi-layer perceptron that takes spatial coordinates (x,y,z) and time (t) as input and outputs V. Employ sinusoidal activation functions for periodic behavior.

- Loss Composition: Total Loss = MSE_Data + λ · MSE_Physics, where MSE_Physics is the mean of r² over all collocation points and the weight λ is tuned to balance the two terms.

- Training: Use L-BFGS, or Adam with a learning-rate scheduler. A common recipe is Adam followed by L-BFGS refinement. The network learns to satisfy the PDE constraints across the domain.
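A minimal PINN sketch of the loss composition, under the simplest steady-state assumption: homogeneous conductivity and no source term, so the residual reduces to r = σ∇²V. The time input and the membrane term f are omitted, as are boundary-condition losses. The network uses a plain sine activation; SIREN proper also uses a frequency factor and special initialization, which are omitted here. The value σ = 0.2 S/m, λ = 1, and the synthetic collocation and label points are assumptions. The Laplacian is built with PyTorch autograd using `create_graph=True`, so the residual itself can be differentiated during training.

```python
import torch
import torch.nn as nn


class Sine(nn.Module):
    def __init__(self, omega=1.0):
        super().__init__()
        self.omega = omega

    def forward(self, x):
        return torch.sin(self.omega * x)


def make_pinn(width=64, depth=4):
    layers, d_in = [], 3                                  # inputs: (x, y, z)
    for _ in range(depth):
        layers += [nn.Linear(d_in, width), Sine()]
        d_in = width
    layers.append(nn.Linear(d_in, 1))                     # output: potential V
    return nn.Sequential(*layers)


def laplacian(v, xyz):
    """Sum of second derivatives of the scalar field v (N, 1) w.r.t. each coordinate of xyz."""
    grad = torch.autograd.grad(v.sum(), xyz, create_graph=True)[0]      # (N, 3)
    lap = torch.zeros_like(v.squeeze(-1))
    for i in range(xyz.shape[1]):
        lap = lap + torch.autograd.grad(grad[:, i].sum(), xyz, create_graph=True)[0][:, i]
    return lap


def pinn_loss(model, colloc, x_data, v_data, sigma=0.2, lam=1.0):
    colloc = colloc.requires_grad_(True)
    residual = sigma * laplacian(model(colloc), colloc)   # r = div(sigma grad V), sigma constant
    mse_physics = residual.pow(2).mean()
    mse_data = (model(x_data) - v_data).pow(2).mean()
    return mse_data + lam * mse_physics


if __name__ == "__main__":
    torch.manual_seed(0)
    model = make_pinn()
    colloc = torch.rand(1024, 3)                          # interior collocation points
    x_data, v_data = torch.rand(64, 3), torch.rand(64, 1) # synthetic "labelled" points
    opt = torch.optim.Adam(model.parameters(), lr=1e-3)
    for step in range(100):
        opt.zero_grad()
        loss = pinn_loss(model, colloc, x_data, v_data)
        loss.backward()
        opt.step()
    print(f"final loss {loss.item():.3e}")
```

The per-coordinate loop costs one extra backward pass per spatial dimension. This is the main reason PINN training is more expensive per step than plain supervised regression.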

Diagrams

Diagram 1: PINN Loss Composition Workflow

Diagram 2: PNS Surrogate Model Selection Logic

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for GPU-Accelerated PNS Surrogate Modeling

| Item | Function in Research | Example/Note |
|---|---|---|
| High-Fidelity FEM Solver | Generates ground truth data for training and validation of DNNs/CNNs. | Sim4Life, COMSOL Multiphysics, ANSYS Maxwell. |
| Anatomical Model Dataset | Provides realistic 3D tissue geometry and conductivity distributions. | Virtual Population (ViP), Duke, Ella; from IT'IS Foundation. |
| Deep Learning Framework | Provides libraries for building, training, and deploying neural networks with GPU support. | PyTorch, TensorFlow, JAX. |
| GPU Computing Hardware | Accelerates model training (from weeks to hours) and enables large-scale parameter sweeps. | NVIDIA DGX Station, or cloud-based (AWS EC2 P3/G4/G5 instances). |
| Automatic Differentiation (AD) | Essential for computing PDE residuals in PINNs without manual derivation. | Built into frameworks (PyTorch Autograd, TensorFlow GradientTape, JAX grad). |
| Physics Constraint Library | Pre-implemented layers/loss functions for common biomedical PDEs. | NVIDIA Modulus (formerly SimNet), DeepXDE. |
| Activating Function Calculator | Translates simulated E-fields into a metric correlated with neural activation. | Custom scripts computing the second spatial derivative of the extracellular potential along nerve trajectories (∂²V_e/∂x²). |

Within the broader thesis on developing GPU-accelerated surrogate models for peripheral nerve stimulation (PNS) research, maximizing computational throughput is critical. Accurate biophysical simulations of nerve responses to electrical stimuli are prohibitively slow on CPUs, hindering parameter exploration and model optimization. This document provides application notes and detailed protocols for leveraging TensorFlow and PyTorch with CUDA to train deep learning surrogate models that emulate complex, high-fidelity PNS simulations, thereby accelerating the design and safety assessment of neuromodulation therapies.

Current Framework Performance Benchmarks (2024)

The following table summarizes key performance metrics for popular GPU-accelerated frameworks, based on standard benchmark models relevant to parameterized scientific simulations.

Table 1: Framework Performance Comparison on NVIDIA Ada Lovelace Architecture (RTX 4090)

| Framework & Version | Mixed Precision Support | Average Training Throughput, ResNet-50 (img/sec) | Memory Efficiency (HPCG Score) | CUDA Kernel Overhead | Multi-GPU Scaling Efficiency (4x) |
|---|---|---|---|---|---|
| PyTorch 2.2 + CUDA 12.2 | Full (AMP, bfloat16) | 1250 | 92.5 TFlops | Low (Compiled) | 88% |
| TensorFlow 2.15 + CUDA 12.2 | Full (fp16, bfloat16) | 1180 | 90.1 TFlops | Medium | 82% |
| JAX 0.4.25 | Full (jax.pmap) | 1310* | 94.0 TFlops | Very Low | 92%* |

Note: JAX included for reference as an emerging high-performance alternative. Throughput figures are indicative and depend on batch size optimization, data pipeline, and specific model architecture. Benchmarks sourced from MLPerf v3.1 and independent repository testing.

Experimental Protocols

Protocol 3.1: Establishing a Baseline GPU-Accelerated Training Environment for Surrogate Model Development

Objective: To configure a reproducible, high-throughput training pipeline for a neural network surrogate that maps stimulation parameters (e.g., amplitude, frequency, electrode geometry) to simulated nerve activation profiles.

Materials:

- Hardware: NVIDIA GPU (Architecture: Ampere or newer, e.g., A100, RTX 4090), ≥32 GB System RAM, NVMe SSD for dataset.

- Software: Ubuntu 22.04 LTS, NVIDIA Driver 545+, CUDA Toolkit 12.2, cuDNN 8.9, Python 3.10.

Procedure:

- Clean Installation: Install the specified NVIDIA driver and CUDA toolkit. Verify the installation with `nvidia-smi` and `nvcc --version`.

- Framework Installation:

- For PyTorch: `pip3 install torch torchvision torchaudio --index-url https://download.pytorch.org/whl/cu121`

- For TensorFlow: `pip3 install "tensorflow[and-cuda]==2.15"`

- Validation Script: Execute a benchmark script to confirm GPU availability and tensor operations. This includes creating random tensors analogous to stimulation parameter batches (e.g., shape `[batch_size, n_parameters]`) and performing forward and backward passes.

- Data Loader Optimization: Implement a custom `Dataset` class for your (parameter, simulation_output) pairs. Use `DataLoader` with `num_workers=N_CPU_cores` and `pin_memory=True` for optimal host-to-device transfer.
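A minimal validation script in the spirit of the step above, assuming PyTorch is installed. It reports the detected devices and runs a forward/backward pass on a random `[batch_size, n_parameters]` batch through a throwaway two-layer network. It falls back to the CPU when CUDA is unavailable. The batch size, parameter count, and output size are arbitrary choices.

```python
import torch
import torch.nn as nn


def validate_environment(batch_size=1024, n_parameters=8, n_outputs=64):
    cuda = torch.cuda.is_available()
    device = torch.device("cuda" if cuda else "cpu")
    print(f"PyTorch {torch.__version__}, CUDA available: {cuda}")
    if cuda:
        print(f"CUDA runtime {torch.version.cuda}, cuDNN {torch.backends.cudnn.version()}")
        for i in range(torch.cuda.device_count()):
            print(f"  GPU {i}: {torch.cuda.get_device_name(i)}")
    # Random batch shaped like (stimulation parameters) -> (simulated activation profile)
    params = torch.randn(batch_size, n_parameters, device=device)
    target = torch.randn(batch_size, n_outputs, device=device)
    model = nn.Sequential(nn.Linear(n_parameters, 256), nn.ReLU(),
                          nn.Linear(256, n_outputs)).to(device)
    loss = nn.functional.mse_loss(model(params), target)
    loss.backward()
    if cuda:
        torch.cuda.synchronize()            # surface asynchronous CUDA errors here
    grads_ok = all(p.grad is not None and bool(torch.isfinite(p.grad).all())
                   for p in model.parameters())
    print(f"forward/backward on {device}: loss={loss.item():.4f}, finite grads={grads_ok}")
    return grads_ok


if __name__ == "__main__":
    validate_environment()
```

The explicit `torch.cuda.synchronize()` matters because CUDA kernels run asynchronously. Without it, a driver or kernel failure may only surface at a later, unrelated line.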

Protocol 3.2: Maximizing Throughput via Mixed Precision and Gradient Accumulation

Objective: To leverage Tensor Cores on modern GPUs for faster training while managing batch size constraints imposed by large network architectures or high-dimensional output spaces (e.g., full neural recruitment curves).

Materials: As in Protocol 3.1, with framework-specific AMP libraries.

Procedure for PyTorch:

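- Scaler Initialization: Instantiate a GradScaler: `scaler = torch.cuda.amp.GradScaler()`.

- Training Loop Modification: Run the forward pass and loss computation inside `torch.autocast(device_type="cuda", dtype=torch.float16)`, then call `scaler.scale(loss).backward()`, `scaler.step(optimizer)`, and `scaler.update()`.

- Gradient Accumulation: For effective large-batch training, divide each micro-batch loss by K and accumulate gradients over K micro-batches before calling `scaler.step()`.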
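A minimal PyTorch sketch of the mixed-precision loop with gradient accumulation over `K` micro-batches (`accum_steps` below), using a toy model and synthetic data. It uses `torch.amp.GradScaler("cuda")` where available (PyTorch 2.3 and later) and otherwise falls back to `torch.cuda.amp.GradScaler` as named in the protocol. On a CPU-only machine, autocast and loss scaling are disabled so the code still runs. Dividing each micro-batch loss by `K` makes the accumulated gradient equal the mean over the effective batch.

```python
import torch
import torch.nn as nn
from torch.utils.data import DataLoader, TensorDataset


def train_amp_accum(model, loader, optimizer, accum_steps=4):
    use_cuda = torch.cuda.is_available()
    device = torch.device("cuda" if use_cuda else "cpu")
    amp_dtype = torch.float16 if use_cuda else torch.bfloat16   # unused when autocast is off
    model.to(device).train()
    if hasattr(torch.amp, "GradScaler"):                        # PyTorch >= 2.3
        scaler = torch.amp.GradScaler("cuda", enabled=use_cuda)
    else:
        scaler = torch.cuda.amp.GradScaler(enabled=use_cuda)
    loss_fn = nn.MSELoss()
    optimizer.zero_grad(set_to_none=True)
    n_updates = 0
    for step, (x, y) in enumerate(loader, start=1):
        x, y = x.to(device, non_blocking=True), y.to(device, non_blocking=True)
        # Forward pass and loss in FP16 on CUDA Tensor Cores; full precision on CPU
        with torch.autocast(device_type=device.type, dtype=amp_dtype, enabled=use_cuda):
            loss = loss_fn(model(x), y) / accum_steps
        scaler.scale(loss).backward()           # gradients accumulate across micro-batches
        # Step every K micro-batches; a final partial group is also stepped (with 1/K scaling)
        if step % accum_steps == 0 or step == len(loader):
            scaler.step(optimizer)              # unscales; skips the step on inf/NaN grads
            scaler.update()
            optimizer.zero_grad(set_to_none=True)
            n_updates += 1
    return n_updates


if __name__ == "__main__":
    torch.manual_seed(0)
    data = TensorDataset(torch.randn(512, 8), torch.randn(512, 1))
    model = nn.Sequential(nn.Linear(8, 64), nn.ReLU(), nn.Linear(64, 1))
    opt = torch.optim.AdamW(model.parameters(), lr=1e-3)
    n = train_amp_accum(model, DataLoader(data, batch_size=32), opt, accum_steps=4)
    print("optimizer updates:", n)
```

Because the scaler may skip a step when it detects overflow, the effective number of parameter updates can be slightly lower than the count returned here during the first iterations, while the loss scale settles.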

Procedure for TensorFlow:

- Policy Setting: `tf.keras.mixed_precision.set_global_policy('mixed_float16')`.

- Model & Optimizer Wrapping: Compute the loss inside a `tf.GradientTape()` context and wrap the optimizer with `tf.keras.mixed_precision.LossScaleOptimizer`. In a custom training loop, scale the loss with `optimizer.get_scaled_loss()` and unscale the gradients with `optimizer.get_unscaled_gradients()`.

- Gradient Accumulation: Manually accumulate gradients from `tape.gradient()` across iterations before applying updates.

Protocol 3.3: Distributed Multi-GPU Training for Hyperparameter Sweeps

Objective: To utilize multiple GPUs for parallelized hyperparameter optimization or training ensemble surrogate models, essential for robust uncertainty quantification in PNS predictions.

Materials: Server with 2-8 NVIDIA GPUs interconnected with NVLink (preferred).

Procedure for PyTorch (DistributedDataParallel - DDP):

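- Initialize Process Group: At the start of the training script, call `torch.distributed.init_process_group(backend='nccl')`. Then bind the process to its GPU with `torch.cuda.set_device(local_rank)`, where `local_rank = int(os.environ["LOCAL_RANK"])` is set by `torchrun`.

- Wrap Model: `model = DDP(model.to(device), device_ids=[local_rank])`.

- Partition Data: Use a `DistributedSampler` with the `DataLoader` so that each GPU receives a unique data subset. Call `sampler.set_epoch(epoch)` at the start of each epoch so the shuffling changes between epochs.

- Launch Script: `torchrun --nproc_per_node=N_GPUs train_script.py`.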
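A minimal DDP training-script skeleton for `torchrun`, using a toy dataset and model. `torchrun` sets the `RANK`, `LOCAL_RANK`, and `WORLD_SIZE` environment variables. The script binds each process to its local GPU, shards data with `DistributedSampler`, and calls `set_epoch` every epoch. The `gloo` fallback for CPU-only machines and the helper `shard_indices` (hypothetical, it only shows how the sampler partitions indices across ranks) are illustrative additions.

```python
import os
import torch
import torch.distributed as dist
import torch.nn as nn
from torch.nn.parallel import DistributedDataParallel as DDP
from torch.utils.data import DataLoader, TensorDataset
from torch.utils.data.distributed import DistributedSampler


def shard_indices(n_items, world_size, rank):
    """Indices DistributedSampler assigns to `rank` (shuffling off, for illustration)."""
    sampler = DistributedSampler(range(n_items), num_replicas=world_size, rank=rank, shuffle=False)
    return list(sampler)


def main(epochs=2):
    use_cuda = torch.cuda.is_available()
    dist.init_process_group(backend="nccl" if use_cuda else "gloo")
    local_rank = int(os.environ["LOCAL_RANK"])
    if use_cuda:
        torch.cuda.set_device(local_rank)
    device = torch.device(f"cuda:{local_rank}" if use_cuda else "cpu")

    data = TensorDataset(torch.randn(1024, 8), torch.randn(1024, 1))
    sampler = DistributedSampler(data)               # rank/world size from the process group
    loader = DataLoader(data, batch_size=64, sampler=sampler, pin_memory=use_cuda)
    model = nn.Sequential(nn.Linear(8, 64), nn.ReLU(), nn.Linear(64, 1)).to(device)
    model = DDP(model, device_ids=[local_rank] if use_cuda else None)
    opt = torch.optim.AdamW(model.parameters(), lr=1e-3)

    for epoch in range(epochs):
        sampler.set_epoch(epoch)                     # different shuffle each epoch
        for x, y in loader:
            opt.zero_grad(set_to_none=True)
            loss = nn.functional.mse_loss(model(x.to(device)), y.to(device))
            loss.backward()                          # DDP all-reduces gradients during backward
            opt.step()
        if dist.get_rank() == 0:
            print(f"epoch {epoch}: last-batch loss {loss.item():.4f}")
    dist.destroy_process_group()


if __name__ == "__main__" and "LOCAL_RANK" in os.environ:
    main()  # launch with: torchrun --nproc_per_node=N_GPUs train_script.py
```

Every rank builds the same model and optimizer. Only the data shard differs per rank, and gradient averaging during `backward()` keeps the replicas synchronized.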

Visualization of Workflows

Workflow for GPU-Accelerated PNS Surrogate Modeling

Mixed Precision Training Loop with Gradient Accumulation

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Computational Reagents for GPU-Accelerated Surrogate Model Training

| Item/Category | Function in PNS Surrogate Research | Example/Note |
|---|---|---|
| NVIDIA CUDA Toolkit | Provides core libraries and compiler for GPU-accelerated computations. | Required for any custom CUDA kernel extensions in PyTorch/TF. |
| NVIDIA cuDNN & cuBLAS | GPU-accelerated primitives for deep neural networks and linear algebra. | Automatically used by frameworks; ensure version compatibility. |
| PyTorch/TensorFlow with AMP | Core frameworks enabling automatic mixed precision training for 2-3x speedup on Tensor Cores. | Use torch.autocast or tf.keras.mixed_precision. |
| NVLink & NVSwitch | High-bandwidth GPU-to-GPU interconnect for efficient multi-GPU scaling. | Speeds up gradient all-reduce in DDP; critical for large model-parallel strategies. |
| Weights & Biases / MLflow | Experiment tracking and hyperparameter logging for systematic sweeps across stimulation parameters. | Enables reproducibility and comparison of surrogate model variants. |
| High-Fidelity Simulator | "Ground truth" generator for training data. | e.g., NEURON with extracellular stimulation, Sim4Life. Outputs are training targets. |
| Custom DataLoader | Efficient pipeline for loading and augmenting (parameter, simulation result) pairs. | Minimizes GPU idle time by prefetching data. |
| HPC Cluster/Scheduler | Manages resource allocation for long-running hyperparameter searches or large-scale data generation. | e.g., SLURM, with GPU node partitions. |

This application note details methodologies for integrating high-fidelity biophysical nerve fiber models into GPU-accelerated surrogate modeling workflows for peripheral nerve stimulation (PNS) research. The core objective is to enhance the biophysical realism of rapid, simulation-driven prediction tools used in therapeutic and safety applications, such as drug discovery and medical device optimization.

Key Nerve Fiber Models: Quantitative Comparison

The McIntyre-Richardson-Grill (MRG) and Spatially Extended Nonlinear Node (SENN) models are widely used reference models for myelinated motor and sensory axon modeling, respectively. Their quantitative parameters are summarized below.

Table 1: Core Biophysical Parameters of Key Nerve Fiber Models

| Parameter | MRG Model (Myelinated, 5.7-16 µm) | SENN Model (Myelinated, Aβ Sensory) | Simplified Hodgkin-Huxley (Typical Surrogate Baseline) |
|---|---|---|---|
| Diameter Range | 5.7 - 16.0 µm | 6.0 - 14.0 µm | N/A (Point Neuron) |
| Number of Compartments | ~1000+ (detailed internode, paranode, node) | ~200-400 (optimized for sensory afferents) | 1 |
| Ion Channel Types | Fast Na⁺, Persistent Na⁺, Slow K⁺, Leak | Frankenhaeuser-Huxley nodal currents (Na⁺, K⁺, nonspecific, leak) | Fast Na⁺, K⁺, Leak |
| Simulation Time (Real-time Factor, CPU) | ~10-100x slower than real-time | ~5-50x slower than real-time | ~100-1000x faster than real-time |
| Primary Application in PNS | Motor axon activation, threshold prediction | Sensory axon response, paresthesia mapping | Network-level feasibility studies |

Experimental Protocols for Integration & Validation

Protocol 3.1: Generating Training Data from Biophysical Models

Objective: To produce a high-quality dataset for surrogate model training by sampling the input parameter space and running full-scale biophysical simulations.

- Define Parameter Space: Identify key independent variables (e.g., axon diameter (5-16 µm), stimulus amplitude (0.1-10.0 V/m), pulse width (10-1000 µs), distance from electrode (0.5-10 mm)).

- Design Sampling Strategy: Use Latin Hypercube Sampling (LHS) to efficiently cover the high-dimensional parameter space with 10,000 - 1,000,000 sample points.

- Automate Simulation Batch: Scripted execution of NEURON or CoreNEURON simulations using the MRG/SENN model for each parameter set. Record output metrics: activation threshold, conduction velocity, membrane potential time series at key nodes.

- Data Curation: Store inputs (parameters) and outputs (metrics) in a structured HDF5 or NumPy array format. Partition into training (70%), validation (15%), and test (15%) sets.

Protocol 3.2: Constructing & Training the GPU-Accelerated Surrogate

Objective: To build a neural network-based surrogate that maps stimulation parameters to axon responses, trained on data from Protocol 3.1.

- Architecture Selection: Implement a deep fully-connected network or a convolutional network for time-series output. Use frameworks like PyTorch or TensorFlow with CUDA support.

- Model Definition: Example architecture: Input layer (parameters) → 5 hidden layers (256-512 units each, ReLU activation) → Output layer (threshold value or potential trace).

- GPU-Accelerated Training: Train using Adam optimizer (learning rate: 1e-4) with Mean Squared Error loss. Employ mini-batch processing (batch size: 256-1024) on NVIDIA A100/V100 GPUs. Use validation set for early stopping.

- Benchmarking: Compare surrogate predictions against held-out test set from biophysical model. Target performance: mean absolute error < 2% of threshold range, inference speed > 10,000 predictions/second.
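The early-stopping rule mentioned in the training step is framework-agnostic, so it can be sketched without committing to PyTorch or TensorFlow. The class below is a hypothetical helper (its `patience` and `min_delta` defaults are illustrative, not values from the protocol); in a real training loop `step` would be called once per epoch with the validation MSE, and the weights saved at `best_epoch` would be restored at the end.

```python
import math


class EarlyStopping:
    """Signal a stop when validation loss has not improved for `patience` epochs."""

    def __init__(self, patience=10, min_delta=0.0):
        self.patience = patience      # epochs to wait without improvement
        self.min_delta = min_delta    # minimum decrease that counts as improvement
        self.best_loss = math.inf
        self.best_epoch = -1
        self.bad_epochs = 0

    def step(self, epoch, val_loss):
        """Record one epoch's validation loss; return True if training should stop."""
        if val_loss < self.best_loss - self.min_delta:
            self.best_loss = val_loss
            self.best_epoch = epoch
            self.bad_epochs = 0  # a real loop would also checkpoint the weights here
        else:
            self.bad_epochs += 1
        return self.bad_epochs >= self.patience


if __name__ == "__main__":
    # Synthetic validation-loss curve: improves, then plateaus.
    losses = [1.0, 0.6, 0.4, 0.35, 0.36, 0.37, 0.355, 0.38]
    stopper = EarlyStopping(patience=3)
    for epoch, loss in enumerate(losses):
        if stopper.step(epoch, loss):
            print(f"stop at epoch {epoch}; best epoch {stopper.best_epoch}")
            break
    # prints: stop at epoch 6; best epoch 3
```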

Protocol 3.3: Validating Surrogate Predictions in a Functional Context

Objective: To validate the integrated surrogate in a realistic application scenario, such as predicting nerve recruitment in a multi-axon bundle.

- Construct Fascicle Model: Define a fascicle containing 100-1000 axons with realistic diameter distributions and spatial positions.

- Define Stimulation Scenario: Model a cuff or point electrode geometry. Calculate the electric field distribution using a finite element method (FEM) solver for a given stimulus.

- Run Batch Prediction: For each axon in the bundle, extract its specific parameters (diameter, position) and the local E-field. Use the trained GPU surrogate to predict its activation status.

- Output Analysis: Generate a recruitment curve (% axons activated vs. stimulus amplitude). Compare the curve and computational time against a full biophysical simulation of the same bundle.
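The batch-prediction and output-analysis steps reduce to counting, for each stimulus amplitude, how many axons have a predicted threshold at or below it. A minimal sketch, assuming the surrogate is wrapped as a batched callable that returns one activation threshold per axon in the same units as the stimulus amplitude; `recruitment_curve`, `recruitment_from_surrogate`, and the per-axon keys `diameter_um` and `field_per_unit_amplitude` are hypothetical names.

```python
from bisect import bisect_right


def recruitment_curve(thresholds, amplitudes):
    """Percentage of axons activated at each stimulus amplitude.

    An axon counts as activated when the amplitude is >= its predicted threshold.
    """
    ordered = sorted(thresholds)
    n = len(ordered)
    # bisect_right counts thresholds <= amplitude in O(log n) per query.
    return [100.0 * bisect_right(ordered, a) / n for a in amplitudes]


def recruitment_from_surrogate(axons, surrogate, amplitudes):
    """Build per-axon feature tuples, batch-predict thresholds, then compute recruitment."""
    # `surrogate` is a hypothetical batched callable: list of (diameter, local field) -> thresholds.
    features = [(a["diameter_um"], a["field_per_unit_amplitude"]) for a in axons]
    thresholds = surrogate(features)
    return recruitment_curve(thresholds, amplitudes)


if __name__ == "__main__":
    # Toy stand-in for the trained GPU surrogate (NOT physiological):
    # thicker axons and stronger local fields give lower thresholds.
    def toy_surrogate(features):
        return [10.0 / (d * e) for d, e in features]

    axons = [{"diameter_um": d, "field_per_unit_amplitude": 1.0} for d in (5.0, 8.0, 10.0, 16.0)]
    print(recruitment_from_surrogate(axons, toy_surrogate, [0.5, 1.0, 2.0]))  # [0.0, 50.0, 100.0]
```

Because only sorting and binary search are involved once thresholds are predicted, the timing comparison against the full biophysical simulation is dominated by the prediction step itself.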

Visualization of Workflows

Title: GPU Surrogate Integration Workflow

Title: Surrogate Validation in Fascicle Model

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials and Tools for Integration

| Item | Function/Description | Example/Supplier |
|---|---|---|
| NEURON Simulation Environment | Primary platform for running MRG, SENN, and other biophysical models. Enables detailed compartmental simulations. | https://neuron.yale.edu |
| CoreNEURON | Optimized simulation engine for GPU/CPU, dramatically speeding up batch execution of NEURON models. | https://github.com/BlueBrain/CoreNEURON |
| PyTorch / TensorFlow | Deep learning frameworks with GPU support for constructing, training, and deploying the neural network surrogate. | PyTorch: https://pytorch.org |
| NVIDIA CUDA Toolkit | Essential API and libraries for GPU-accelerated computing. Required for both CoreNEURON and deep learning training. | https://developer.nvidia.com/cuda-toolkit |
| HDF5 Data Format | Hierarchical data format ideal for storing and managing large, complex simulation datasets for training. | https://www.hdfgroup.org/solutions/hdf5/ |
| Latin Hypercube Sampling (LHS) Library | Python library (e.g., SMT, pyDOE) for generating efficient, space-filling parameter samples. | SMT: https://github.com/SMTorg/smt |
| Mesh Generation & FEM Tool | Software for defining electrode geometries and calculating electric fields (e.g., COMSOL, SCIRun, FEniCS). | COMSOL Multiphysics |
| High-Performance Computing (HPC) Cluster or Cloud GPU Instance | Necessary computational resource for large-scale batch simulations and deep learning training. | AWS EC2 (P3/P4 instances), NVIDIA DGX systems, local HPC |

This application note details protocols for integrating GPU-accelerated surrogate models for Peripheral Nerve Stimulation (PNS) prediction into medical device development and safety screening pipelines. Once validated, these machine learning models turn in silico research tools into components that can support regulatory-grade design iteration and risk assessment.

Model Deployment Architecture

Core System Components

The deployment ecosystem consists of three interconnected layers:

Table 1: Deployment Stack Components

| Layer | Component | Function | Technology Example |
|---|---|---|---|
| Serving | Inference API | Hosts model; processes prediction requests. | TensorFlow Serving, NVIDIA Triton |
| Orchestration | Workflow Manager | Automates screening pipelines & device design loops. | Nextflow, Apache Airflow |
| Integration | CAD/Simulation Link | Bridges electromagnetic simulation software with the model. | COMSOL LiveLink, Custom Python API |

Key Integration Protocols

Protocol 2.1: Model Containerization for Reproducible Inference

- Package the trained surrogate model (e.g., a convolutional neural network for field-to-PNS prediction) and its dependencies into a Docker container.

- Include preprocessing scripts that transform raw electromagnetic field simulation outputs into the model's required input tensor format.

- Expose a REST/gRPC API endpoint using a framework like FastAPI.

- Deploy the container to a Kubernetes cluster or cloud instance, enabling scalable, on-demand inference.
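The preprocessing script bundled with the container typically turns the field solver's export into a fixed-shape input tensor. The sketch below assumes, hypothetically, that the FEM tool exports a CSV with integer voxel indices `i,j,k` and field components `ex,ey,ez`, and that the model expects a channel-first `[3][nx][ny][nz]` array; the function names and the normalization `scale` are illustrative, not part of any specific serving framework.

```python
import csv
import io


def parse_field_csv(text):
    """Parse a hypothetical FEM export with header i,j,k,ex,ey,ez into tuples."""
    records = []
    for row in csv.DictReader(io.StringIO(text)):
        records.append((int(row["i"]), int(row["j"]), int(row["k"]),
                        float(row["ex"]), float(row["ey"]), float(row["ez"])))
    return records


def field_records_to_tensor(records, grid_shape, scale=1.0):
    """Convert voxel-indexed E-field samples to a channel-first nested list [3][nx][ny][nz].

    `scale` divides every field value (e.g., a normalization constant from training).
    Voxels without a record stay at 0.0.
    """
    nx, ny, nz = grid_shape
    tensor = [[[[0.0] * nz for _ in range(ny)] for _ in range(nx)] for _ in range(3)]
    for i, j, k, ex, ey, ez in records:
        if not (0 <= i < nx and 0 <= j < ny and 0 <= k < nz):
            raise ValueError(f"voxel index {(i, j, k)} outside grid {grid_shape}")
        for channel, value in enumerate((ex, ey, ez)):
            tensor[channel][i][j][k] = value / scale
    return tensor
```

Inside the API handler, the nested list would normally be converted with `numpy.asarray(tensor, dtype=numpy.float32)` (or directly to a framework tensor) before inference.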

Protocol 2.2: Embedding Model in Device Design Loop

- Simulation: Run a finite-element method (FEM) simulation of a new device coil geometry in software (e.g., SIM4LIFE, COMSOL).

- Field Extraction: Automatically extract the resulting 3D E-field distribution for the region of interest.

- Prediction: Send the field data to the surrogate model via API, receiving a PNS threshold estimate (e.g., stimulation strength over time) in milliseconds.

- Design Adjustment: The result informs the next design iteration (e.g., coil winding adjustment) before proceeding to costly physical prototyping.
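The four steps above can be expressed as a small driver that stops once the predicted PNS metric falls below a limit. This is a sketch under stated assumptions: `simulate`, `extract_field`, `predict_pns`, and `adjust` are hypothetical hooks standing in for the SIM4LIFE/COMSOL scripting API, the field-extraction script, the request to the inference service, and the designer's update rule, and the default limit of 0.8 simply mirrors the example threshold used in the screening protocol.

```python
def run_design_loop(design, simulate, extract_field, predict_pns, adjust,
                    limit=0.8, max_iterations=20):
    """Iterate simulate -> extract -> predict -> adjust until the PNS metric is below `limit`.

    Returns (accepted_design, history), where history lists (design, metric) pairs.
    All callables are hypothetical hooks supplied by the caller.
    """
    history = []
    for _ in range(max_iterations):
        result = simulate(design)          # FEM run for the candidate geometry
        field = extract_field(result)      # 3D E-field in the region of interest
        metric = predict_pns(field)        # surrogate prediction via the API
        history.append((design, metric))
        if metric < limit:
            return design, history
        design = adjust(design, metric)    # e.g., change coil winding
    raise RuntimeError(f"no design met PNS metric < {limit} in {max_iterations} iterations")
```

Keeping the hooks injectable lets the same driver run against stubs in unit tests and against the real simulation and inference services in production.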

Safety Screening Pipeline Protocol

This protocol outlines a standardized workflow for using the deployed model to screen novel device configurations for PNS risk.

Protocol 3.1: Automated Batch Safety Screening

- Objective: To evaluate a batch of N proposed device operating points (varying frequency, amplitude, pulse shape) for PNS risk.

- Input: A CSV manifest file listing parameter sets for each device configuration.

- Workflow:

- Parameter Parsing: The pipeline ingests the manifest file.

- Simulation Generation: For each parameter set, an automated script generates and submits a corresponding electromagnetic simulation job to an HPC cluster.

- Result Monitoring & Trigger: Upon simulation completion, the pipeline detects output files and triggers the inference step.

- Model Inference: The E-field results are sent to the deployed surrogate model.

- Risk Classification: Model predictions are compared against a pre-defined PNS safety threshold (e.g., PNS Metric < 0.8).

- Report Generation: A comprehensive report flags high-risk configurations and logs all predictions.
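The parsing, classification, and reporting steps of the batch screen can be sketched with Python's standard `csv` module. The manifest columns (`config_id`, `frequency_hz`, `amplitude`) and the `predict_pns` callable are hypothetical; in the full pipeline that callable would wrap simulation submission, completion monitoring, and the request to the deployed surrogate, all orchestrated by a workflow manager such as Nextflow.

```python
import csv
import io


def screen_manifest(manifest_text, predict_pns, threshold=0.8):
    """Classify each configuration in a CSV manifest as PASS or HIGH_RISK.

    `predict_pns` is a hypothetical callable mapping one manifest row (dict of strings)
    to a PNS metric; configurations with metric >= threshold are flagged.
    """
    report = []
    for row in csv.DictReader(io.StringIO(manifest_text)):
        metric = predict_pns(row)
        status = "PASS" if metric < threshold else "HIGH_RISK"
        report.append({**row, "pns_metric": metric, "status": status})
    return report


def write_report(report):
    """Serialize the report as CSV text, listing HIGH_RISK configurations first."""
    if not report:
        return ""
    # Stable sort: False (high-risk) sorts before True, original order kept otherwise.
    ordered = sorted(report, key=lambda r: r["status"] != "HIGH_RISK")
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=list(ordered[0].keys()))
    writer.writeheader()
    writer.writerows(ordered)
    return buffer.getvalue()
```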

Diagram Title: Automated Batch Safety Screening Workflow

Validation & Benchmarking Data

Deployment requires rigorous validation against gold-standard, computationally intensive FEM solvers.

Table 2: Surrogate Model Performance vs. Full Simulation

| Validation Metric | Full FEM Simulation | GPU-Accelerated Surrogate Model | Speed-up Factor |
|---|---|---|---|
| Runtime per Design | 4.5 - 6.2 hours | 8 - 12 seconds | ~2000x |
| PNS Threshold Prediction Error | (Ground Truth) | Mean Absolute Error: ≤ 3.1% | N/A |
| Hardware Utilization | CPU Cluster (High) | Single NVIDIA A100 GPU (>90% GPU utilization) | N/A |
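As a consistency check on the runtime row, the speed-up implied by the table can be bounded by pairing the fastest FEM run with the slowest surrogate run, and vice versa:

```latex
S_{\min} = \frac{4.5 \times 3600\,\mathrm{s}}{12\,\mathrm{s}} = \frac{16\,200}{12} = 1350,
\qquad
S_{\max} = \frac{6.2 \times 3600\,\mathrm{s}}{8\,\mathrm{s}} = \frac{22\,320}{8} = 2790
```

The reported ~2000x therefore lies inside the implied range of roughly 1,350-2,790x, close to its midpoint of 2,070x.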

Protocol 4.1: Continuous Validation Benchmarking

- Maintain a curated set of 50-100 validated device simulation cases as a ground-truth benchmark.

- During model deployment updates, automatically run inference on the benchmark set.

- Compare predictions to ground truth, ensuring error metrics (MAE, RMSE) remain within acceptable tolerances.

- Log performance drift and trigger model retraining alerts if thresholds are breached.
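The comparison and alerting steps of the continuous benchmark can be sketched as follows. The function names and the tolerance values in the usage example are hypothetical; the metrics are the MAE and RMSE named in the protocol, plus MAE expressed as a percentage of the reference threshold range, matching the way the surrogate's accuracy target is stated in Protocol 3.2.

```python
import math


def error_metrics(predicted, reference):
    """Return MAE, RMSE, and MAE as a percentage of the reference range."""
    if not reference or len(predicted) != len(reference):
        raise ValueError("predicted and reference must be non-empty and of equal length")
    errors = [p - r for p, r in zip(predicted, reference)]
    mae = sum(abs(e) for e in errors) / len(errors)
    rmse = math.sqrt(sum(e * e for e in errors) / len(errors))
    span = max(reference) - min(reference)
    # Undefined when all reference values are equal; inf makes it count as a breach.
    mae_pct = 100.0 * mae / span if span > 0 else math.inf
    return {"mae": mae, "rmse": rmse, "mae_pct_range": mae_pct}


def check_drift(predicted, reference, tolerances):
    """Compare metrics to tolerances; return (metrics, names of breached metrics)."""
    metrics = error_metrics(predicted, reference)
    breached = [name for name, limit in tolerances.items() if metrics[name] > limit]
    return metrics, breached


if __name__ == "__main__":
    reference = [0.92, 1.10, 0.75, 1.31]   # ground-truth PNS thresholds (illustrative)
    predicted = [0.95, 1.07, 0.78, 1.25]   # surrogate predictions after an update
    metrics, breached = check_drift(predicted, reference, {"mae_pct_range": 5.0, "rmse": 0.05})
    if breached:
        print("retraining alert:", breached, metrics)
```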

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for PNS Surrogate Model Deployment

| Item / Solution | Function in Deployment | Example / Note |
|---|---|---|
| NVIDIA Triton Inference Server | Optimized serving of multiple ML models with GPU acceleration. | Supports TensorRT, PyTorch, TensorFlow backends. |
| SIM4LIFE / COMSOL with API | Electromagnetic simulation platform enabling automated simulation scripting. | Required for generating the input field data for the model. |
| Nextflow | Orchestrates complex, multi-step screening pipelines across heterogeneous compute environments. | Manages transitions from simulation to inference to reporting. |
| Docker / Singularity | Containerization ensures model runtime environment consistency from development to production. | Critical for reproducibility on HPC and cloud systems. |
| Prometheus & Grafana | Monitoring stack for tracking API latency, GPU utilization, and prediction throughput. | Essential for maintaining SLA in production pipelines. |
| Digital Phantom Libraries | Standardized anatomical models (e.g., "Duke", "Ella" from IT'IS) used in simulations. | Ensures consistent, comparable PNS evaluation across studies. |

Integrated Design-Safety Pipeline

The final deployment integrates device design and safety assessment into a continuous loop.

Diagram Title: Integrated Device Design and Safety Screening Loop

Overcoming Implementation Hurdles: Strategies for Robust and Efficient PNS Surrogates

For GPU-accelerated surrogate models in peripheral nerve stimulation (PNS) research, a primary bottleneck is the scarcity of high-fidelity, multi-scale biological datasets. Acquiring comprehensive in vivo or in vitro electrophysiological and morphological data for human peripheral nerves is ethically challenging, technically complex, and low-throughput. This data scarcity impedes the training of robust, generalizable deep learning models that predict neural recruitment or drug-modulated responses. Transfer Learning (TL) and Data Augmentation (DA) are critical methodologies to overcome this limitation: TL leverages existing large-scale datasets, while DA artificially expands small, domain-specific datasets, together making it possible to train accurate surrogate models on high-performance computing (HPC) clusters.

Core Techniques & Application Notes

Transfer Learning Protocols

TL re-purposes models pre-trained on large, source datasets (e.g., ImageNet, public electrophysiology repositories) for our target PNS tasks with limited data.

Protocol 2.1.1: Feature Extraction & Fine-Tuning for Convolutional Neural Networks (CNNs)

- Objective: Adapt a CNN pre-trained on general image data to analyze histological nerve cross-section images for automated fascicle segmentation.

- Pre-trained Model: ResNet-50 (weights from ImageNet).

- Procedure:

- Base Model Loading: Load ResNet-50, removing the final fully connected (classification) layer.

- Feature Extraction Phase: Freeze all convolutional base layers. Add new, randomly initialized task-specific layers (e.g., a U-Net-like decoder for segmentation). Train only the new layers on the target PNS image dataset for 50 epochs using Adam optimizer (lr=1e-3).

- Fine-Tuning Phase: Unfreeze the top N layers (e.g., the last 20% of the base model). Jointly train the unfrozen base layers and the new layers at a lower learning rate (lr=1e-5) for an additional 30 epochs to subtly adapt relevant features.

- Regularization: Employ heavy dropout (0.5) and L2 regularization in the new layers to prevent overfitting.

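- GPU Acceleration Note: Use reduced-precision training on modern GPUs to speed up both phases by 1.5-3x. FP16 mixed precision is supported on both NVIDIA V100 and A100, whereas TensorFloat-32 (TF32) is available only on Ampere-class GPUs such as the A100.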
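The two-phase schedule can be written down as data before any framework code is involved. A minimal sketch, assuming that "layers" are counted as ResNet-50 bottleneck blocks (using the `layer1` to `layer4` block naming of torchvision's ResNet-50) and that the new decoder is a single module called `decoder`; `Phase`, `resnet50_block_names`, and `two_phase_plan` are hypothetical names. The epoch counts and learning rates are the ones given in the procedure.

```python
from dataclasses import dataclass, field


@dataclass
class Phase:
    name: str
    epochs: int
    learning_rate: float
    trainable: list = field(default_factory=list)  # module-name prefixes left unfrozen


def resnet50_block_names():
    """Bottleneck block names following torchvision's ResNet-50 layout (3, 4, 6, 3 blocks)."""
    blocks_per_stage = {"layer1": 3, "layer2": 4, "layer3": 6, "layer4": 3}
    return [f"{stage}.{i}" for stage, n in blocks_per_stage.items() for i in range(n)]


def two_phase_plan(base_blocks, head_modules, unfreeze_fraction=0.2):
    """Phase 1 trains only the new head; phase 2 also unfreezes the top blocks of the base."""
    n_unfreeze = max(1, round(unfreeze_fraction * len(base_blocks)))
    top_blocks = list(base_blocks[-n_unfreeze:])
    return [
        Phase("feature_extraction", epochs=50, learning_rate=1e-3,
              trainable=list(head_modules)),
        Phase("fine_tuning", epochs=30, learning_rate=1e-5,
              trainable=top_blocks + list(head_modules)),
    ]


if __name__ == "__main__":
    for phase in two_phase_plan(resnet50_block_names(), ["decoder"]):
        print(phase)
```

In PyTorch, such a plan would typically be applied by setting `requires_grad` on each parameter from `model.named_parameters()` whose name starts with one of the trainable prefixes, and by creating a fresh optimizer with the phase's learning rate at the start of each phase.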

Protocol 2.1.2: Domain-Adversarial Training for Electrophysiology Signal Analysis

- Objective: Adapt a model trained on synthetic or rodent electrophysiology data to analyze human nerve recordings, mitigating domain shift.

- Method: Implement a Domain-Adversarial Neural Network (DANN) architecture.

- Workflow Diagram:

Title: Domain-Adversarial Training Workflow for PNS Signals
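The component that makes DANN adversarial is the gradient reversal layer placed between the shared feature extractor and the domain classifier: it is the identity on the forward pass and multiplies the gradient by -λ on the backward pass. The sketch below shows that behaviour on plain Python lists, together with the λ schedule proposed in the original DANN paper (Ganin and Lempitsky, 2015); the class and function names are hypothetical and the lists stand in for real tensors.

```python
import math


def dann_lambda(progress, gamma=10.0):
    """Adaptation weight schedule 2 / (1 + exp(-gamma * p)) - 1, rising from 0 toward 1.

    `progress` is the fraction of training completed, in [0, 1].
    """
    return 2.0 / (1.0 + math.exp(-gamma * progress)) - 1.0


class GradientReversal:
    """Manual gradient reversal layer: identity forward, gradient scaled by -lambda backward."""

    def __init__(self, lam):
        self.lam = lam

    def forward(self, features):
        # Identity: features reach the domain classifier unchanged.
        return list(features)

    def backward(self, grad_output):
        # Reversed gradient pushes the feature extractor to confuse the domain classifier,
        # encouraging features that do not reveal rodent/synthetic vs. human origin.
        return [-self.lam * g for g in grad_output]
```

In an autodiff framework the same behaviour is usually written as a custom autograd function (for example, a `torch.autograd.Function` subclass whose `backward` returns the incoming gradient multiplied by -λ), with λ updated from `dann_lambda` as training progresses.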

Data Augmentation Protocols

DA generates synthetic training data through label-preserving transformations, crucial for augmenting small experimental PNS datasets.

Protocol 2.2.1: Physics-Informed Augmentation for Computational Models

- Objective: Augment training data for a surrogate model that predicts axon activation thresholds based on finite element method (FEM) electric fields.

- Procedure:

- Parameter Space Sampling: Define ranges for key biophysical parameters (e.g., axon diameter ±30%, membrane resistivity ±20%, fascicle permittivity ±15%).

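- Synthetic Generation: Perturb these parameters via Latin Hypercube Sampling. Tissue properties such as fascicle permittivity change the electric field, so re-run the original FEM model to generate the corresponding field distributions; axon-level properties (diameter, membrane resistivity) leave the field unchanged and enter only the neural response computed in the next step.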

- Label Calculation: Compute the new activation thresholds for each perturbed configuration using the GPU-accelerated biophysical simulator (e.g., NEURON with CoreNEURON).

- Table 1: Augmentation Parameters for PNS FEM Models